Differential Logic and Dynamic Systems

Author: Jon Awbrey

| Stand and unfold yourself. | Hamlet: Francisco, 1.1.2 |

This article develops a differential extension of propositional calculus and applies it to a context of problems arising in dynamic systems. The work pursued here is coordinated with a parallel application that focuses on neural network systems, but the dependencies are arranged to make the present article the main and the more self-contained work, to serve as a conceptual frame and a technical background for the network project.

Review and Transition

This note continues a previous discussion on the problem of dealing with change and diversity in logic-based intelligent systems. It is useful to begin by summarizing essential material from previous reports.

Table 1 outlines a notation for propositional calculus based on two types of logical connectives, both of variable \(k\)-ary scope.

- A bracketed list of propositional expressions in the form \(\texttt{(} e_1, e_2, \ldots, e_{k-1}, e_k \texttt{)}\) indicates that exactly one of the propositions \(e_1, e_2, \ldots, e_{k-1}, e_k\) is false.

- A concatenation of propositional expressions in the form \(e_1 ~ e_2 ~ \ldots ~ e_{k-1} ~ e_k\) indicates that all of the propositions \(e_1, e_2, \ldots, e_{k-1}, e_k\) are true, in other words, that their logical conjunction is true.

All other propositional connectives can be obtained in a very efficient style of representation through combinations of these two forms. Strictly speaking, the concatenation form is dispensable in light of the bracketed form, but it is convenient to maintain it as an abbreviation of more complicated bracket expressions.
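As a minimal sketch of how other connectives arise from the two forms, the following Python fragment models the bracket as "exactly one argument is false" and concatenation as conjunction. The function names `bracket`, `conj`, `neg`, `disj`, and `implies` are illustrative assumptions, not part of the notation itself.

```python
def bracket(*args):
    """Bracket form (e1, ..., ek): true iff exactly one argument is false."""
    return sum(1 for e in args if not e) == 1

def conj(*args):
    """Concatenation e1 e2 ... ek: true iff every argument is true."""
    return all(args)

def neg(x):
    # (x) : a bracket around one expression is its negation
    return bracket(x)

def disj(x, y):
    # ((x)(y)) : negation of the conjunction of the negations
    return bracket(conj(bracket(x), bracket(y)))

def implies(x, y):
    # (x (y)) : it is not the case that x holds and y fails
    return bracket(conj(x, bracket(y)))

for x in (False, True):
    for y in (False, True):
        print(x, y, neg(x), disj(x, y), implies(x, y))
```

Note that `bracket()` with no arguments is false and `conj()` with no arguments is true, in keeping with the reading of the empty bracket as falsity and the empty word as truth.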

This treatment of propositional logic is derived from the work of C.S. Peirce [P1, P2], who gave this approach an extensive development in his graphical systems of predicate, relational, and modal logic [Rob]. More recently, these ideas were revived and supplemented in an alternative interpretation by George Spencer-Brown [SpB]. Both of these authors used other forms of enclosure where I use parentheses, but the structural topologies of expression and the functional varieties of interpretation are fundamentally the same.

While working with expressions solely in propositional calculus, it is easiest to use plain parentheses for logical connectives. In contexts where parentheses are needed for other purposes “teletype” parentheses \(\texttt{(} \ldots \texttt{)}\) or barred parentheses \((\!| \ldots |\!)\) may be used for logical operators.

The briefest expression for logical truth is the empty word, usually denoted by \({}^{\backprime\backprime} \boldsymbol\varepsilon {}^{\prime\prime}\) or \({}^{\backprime\backprime} \boldsymbol\lambda {}^{\prime\prime}\) in formal languages, where it forms the identity element for concatenation. To make it visible in this text, it may be denoted by the equivalent expression \({}^{\backprime\backprime} \texttt{((} ~ \texttt{))} {}^{\prime\prime},\) or, especially if operating in an algebraic context, by a simple \({}^{\backprime\backprime} 1 {}^{\prime\prime}.\) Also when working in an algebraic mode, the plus sign \({}^{\backprime\backprime} + {}^{\prime\prime}\) may be used for exclusive disjunction. For example, we have the following paraphrases of algebraic expressions by bracket expressions:

\(\begin{matrix} x + y ~=~ \texttt{(} x, y \texttt{)} \\[6pt] x + y + z ~=~ \texttt{((} x, y \texttt{)}, z \texttt{)} ~=~ \texttt{(} x, \texttt{(} y, z \texttt{))} \end{matrix}\)

It is important to note that the last expressions are not equivalent to the triple bracket \(\texttt{(} x, y, z \texttt{)}.\)
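The difference can be checked by brute force. The sketch below, with hypothetical helper names `bracket` and `xor`, tabulates the nested brackets, the algebraic sum, and the triple bracket over all eight assignments to \(x, y, z.\)

```python
from itertools import product

def bracket(*args):
    """Bracket form: true iff exactly one argument is false."""
    return sum(1 for e in args if not e) == 1

def xor(*args):
    """Algebraic sum in B: true iff an odd number of arguments are true."""
    return sum(bool(e) for e in args) % 2 == 1

cells = list(product([False, True], repeat=3))

nested_left = [bracket(bracket(x, y), z) for x, y, z in cells]
nested_right = [bracket(x, bracket(y, z)) for x, y, z in cells]
algebraic = [xor(x, y, z) for x, y, z in cells]
triple = [bracket(x, y, z) for x, y, z in cells]

print(nested_left == nested_right == algebraic)  # True
print(triple == algebraic)                       # False
```

The two readings part ways, for instance, at the cell where \(x, y, z\) are all true: the sum \(x + y + z\) is true there, while the triple bracket is false because no argument is false.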

| \(\text{Expression}\) | \(\text{Interpretation}\) | \(\text{Other Notations}\) |

| \(~\) | \(\text{True}\) | \(1\) |

| \(\texttt{(} ~ \texttt{)}\) | \(\text{False}\) | \(0\) |

| \(x\) | \(x\) | \(x\) |

| \(\texttt{(} x \texttt{)}\) | \(\text{Not}~ x\) | \(\begin{matrix} x' \\ \tilde{x} \\ \lnot x \end{matrix}\) |

| \(x~y~z\) | \(x ~\text{and}~ y ~\text{and}~ z\) | \(x \land y \land z\) |

| \(\texttt{((} x \texttt{)(} y \texttt{)(} z \texttt{))}\) | \(x ~\text{or}~ y ~\text{or}~ z\) | \(x \lor y \lor z\) |

| \(\texttt{(} x ~ \texttt{(} y \texttt{))}\) | \(\begin{matrix} x ~\text{implies}~ y \\ \mathrm{If}~ x ~\text{then}~ y \end{matrix}\) | \(x \Rightarrow y\) |

| \(\texttt{(} x \texttt{,} y \texttt{)}\) | \(\begin{matrix} x ~\text{not equal to}~ y \\ x ~\text{exclusive or}~ y \end{matrix}\) | \(\begin{matrix} x \ne y \\ x + y \end{matrix}\) |

| \(\texttt{((} x \texttt{,} y \texttt{))}\) | \(\begin{matrix} x ~\text{is equal to}~ y \\ x ~\text{if and only if}~ y \end{matrix}\) | \(\begin{matrix} x = y \\ x \Leftrightarrow y \end{matrix}\) |

| \(\texttt{(} x \texttt{,} y \texttt{,} z \texttt{)}\) | \(\begin{matrix} \text{Just one of} \\ x, y, z \\ \text{is false}. \end{matrix}\) | \(\begin{matrix} x'y~z~ & \lor \\ x~y'z~ & \lor \\ x~y~z' & \end{matrix}\) |

| \(\texttt{((} x \texttt{),(} y \texttt{),(} z \texttt{))}\) | \(\begin{matrix} \text{Just one of} \\ x, y, z \\ \text{is true}. \\ & \\ \text{Partition all} \\ \text{into}~ x, y, z. \end{matrix}\) | \(\begin{matrix} x~y'z' & \lor \\ x'y~z' & \lor \\ x'y'z~ & \end{matrix}\) |

| \(\begin{matrix} \texttt{((} x \texttt{,} y \texttt{),} z \texttt{)} \\ & \\ \texttt{(} x \texttt{,(} y \texttt{,} z \texttt{))} \end{matrix}\) | \(\begin{matrix} \text{Oddly many of} \\ x, y, z \\ \text{are true}. \end{matrix}\) | \(x + y + z\) \(\begin{matrix} x~y~z~ & \lor \\ x~y'z' & \lor \\ x'y~z' & \lor \\ x'y'z~ & \end{matrix}\) |

| \(\texttt{(} w \texttt{,(} x \texttt{),(} y \texttt{),(} z \texttt{))}\) | \(\begin{matrix} \text{Partition}~ w \\ \text{into}~ x, y, z. \\ & \\ \text{Genus}~ w ~\text{comprises} \\ \text{species}~ x, y, z. \end{matrix}\) | \(\begin{matrix} w'x'y'z' & \lor \\ w~x~y'z' & \lor \\ w~x'y~z' & \lor \\ w~x'y'z~ & \end{matrix}\) |

Note. The usage that one often sees, of a plus sign "\(+\)" to represent inclusive disjunction, and the reference to this operation as boolean addition, is a misnomer on at least two counts. Boole used the plus sign to represent exclusive disjunction (at any rate, an operation of aggregation restricted in its logical interpretation to cases where the represented sets are disjoint (Boole, 32)), as any mathematician with a sensitivity to the ring and field properties of algebra would do:

The expression \(x + y\) seems indeed uninterpretable, unless it be assumed that the things represented by \(x\) and the things represented by \(y\) are entirely separate; that they embrace no individuals in common. (Boole, 66).

It was only later that Peirce and Jevons treated inclusive disjunction as a fundamental operation, but these authors, with a respect for the algebraic properties that were already associated with the plus sign, used a variety of other symbols for inclusive disjunction (Sty, 177, 189). It seems to have been Schröder who later reassigned the plus sign to inclusive disjunction (Sty, 208). Additional information, discussion, and references can be found in (Boole) and (Sty, 177–263). Aside from these historical points, which never really count against a current practice that has gained a life of its own, this usage does have a further disadvantage of cutting or confounding the lines of communication between algebra and logic. For this reason, it will be avoided here.

A Functional Conception of Propositional Calculus

| Out of the dimness opposite equals advance . . . . |

| — Walt Whitman, Leaves of Grass, [Whi, 28] |

In the general case, we start with a set of logical features \(\{a_1, \ldots, a_n\}\) that represent properties of objects or propositions about the world. In concrete examples the features \(\{a_i\}\) commonly appear as capital letters from an alphabet like \(\{A, B, C, \ldots\}\) or as meaningful words from a linguistic vocabulary of codes. This language can be drawn from any sources, whether natural, technical, or artificial in character and interpretation. In the application to dynamic systems we tend to use the letters \(\{x_1, \ldots, x_n\}\) as our coordinate propositions, and to interpret them as denoting properties of a system's state, that is, as propositions about its location in configuration space. Because I have to consider non-deterministic systems from the outset, I often use the word state in a loose sense, to denote the position or configuration component of a contemplated state vector, whether or not it ever gets a deterministic completion.

The set of logical features \(\{a_1, \ldots, a_n\}\) provides a basis for generating an \(n\)-dimensional universe of discourse that I denote as \([a_1, \ldots, a_n].\) It is useful to consider each universe of discourse as a unified categorical object that incorporates both the set of points \(\langle a_1, \ldots, a_n \rangle\) and the set of propositions \(f : \langle a_1, \ldots, a_n \rangle \to \mathbb{B}\) that are implicit in the ordinary picture of a venn diagram on \(n\) features. Thus, we may regard the universe of discourse \([a_1, \ldots, a_n]\) as an ordered pair having the type \((\mathbb{B}^n, (\mathbb{B}^n \to \mathbb{B})),\) and we may abbreviate this last type designation as \(\mathbb{B}^n\ +\!\to \mathbb{B},\) or even more succinctly as \([\mathbb{B}^n].\) (Used this way, the angle brackets \(\langle\ldots\rangle\) are referred to as generator brackets.)
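To make the pairing concrete, here is a small sketch that builds both components of a universe of discourse for a given \(n.\) The function name `universe` and the choice to store each proposition as a dictionary from points to values are assumptions made only for illustration.

```python
from itertools import product

def universe(n):
    """Return (points, propositions) for the universe on n features.

    points       : all coordinate tuples in B^n
    propositions : all maps B^n -> B, each stored as a dict keyed by point
    """
    points = list(product((0, 1), repeat=n))
    propositions = [dict(zip(points, values))
                    for values in product((0, 1), repeat=len(points))]
    return points, propositions

points, propositions = universe(2)
print(len(points), len(propositions))  # 4 16
```

The counts are \(2^n\) points and \(2^{2^n}\) propositions, so exhaustive enumeration of this kind is practical only for very small \(n.\)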

Table 2 exhibits the scheme of notation I use to formalize the domain of propositional calculus, corresponding to the logical content of truth tables and venn diagrams. Although it overworks the square brackets a bit, I also use either one of the equivalent notations \([n]\) or \(\mathbf{n}\) to denote the data type of a finite set on \(n\) elements.

| \(\text{Symbol}\) | \(\text{Notation}\) | \(\text{Description}\) | \(\text{Type}\) |

| \(\mathfrak{A}\) | \(\{ {}^{\backprime\backprime} a_1 {}^{\prime\prime}, \ldots, {}^{\backprime\backprime} a_n {}^{\prime\prime} \}\) | \(\text{Alphabet}\) | \([n] = \mathbf{n}\) |

| \(\mathcal{A}\) | \(\{ a_1, \ldots, a_n \}\) | \(\text{Basis}\) | \([n] = \mathbf{n}\) |

| \(A_i\) | \(\{ \texttt{(} a_i \texttt{)}, a_i \}\) | \(\text{Dimension}~ i\) | \(\mathbb{B}\) |

| \(A\) | \(\begin{matrix} \langle \mathcal{A} \rangle \\[2pt] \langle a_1, \ldots, a_n \rangle \\[2pt] \{ (a_1, \ldots, a_n) \} \\[2pt] A_1 \times \ldots \times A_n \\[2pt] \textstyle \prod_{i=1}^n A_i \end{matrix}\) | \(\begin{matrix} \text{Set of cells}, \\[2pt] \text{coordinate tuples}, \\[2pt] \text{points, or vectors} \\[2pt] \text{in the universe} \\[2pt] \text{of discourse} \end{matrix}\) | \(\mathbb{B}^n\) |

| \(A^*\) | \((\mathrm{hom} : A \to \mathbb{B})\) | \(\text{Linear functions}\) | \((\mathbb{B}^n)^* \cong \mathbb{B}^n\) |

| \(A^\uparrow\) | \((A \to \mathbb{B})\) | \(\text{Boolean functions}\) | \(\mathbb{B}^n \to \mathbb{B}\) |

| \(A^\bullet\) | \(\begin{matrix} [\mathcal{A}] \\[2pt] (A, A^\uparrow) \\[2pt] (A ~+\!\to \mathbb{B}) \\[2pt] (A, (A \to \mathbb{B})) \\[2pt] [a_1, \ldots, a_n] \end{matrix}\) | \(\begin{matrix} \text{Universe of discourse} \\[2pt] \text{based on the features} \\[2pt] \{ a_1, \ldots, a_n \} \end{matrix}\) | \(\begin{matrix} (\mathbb{B}^n, (\mathbb{B}^n \to \mathbb{B})) \\[2pt] (\mathbb{B}^n ~+\!\to \mathbb{B}) \\[2pt] [\mathbb{B}^n] \end{matrix}\) |

Qualitative Logic and Quantitative Analogy

| Logical, however, is used in a third sense, which is at once more vital and more practical; to denote, namely, the systematic care, negative and positive, taken to safeguard reflection so that it may yield the best results under the given conditions. |

| — John Dewey, How We Think, [Dew, 56] | ||

These concepts and notations may now be explained in greater detail. In order to begin as simply as possible, let us distinguish two levels of analysis and set out initially on the easier path. On the first level of analysis we take spaces like \(\mathbb{B},\) \(\mathbb{B}^n,\) and \((\mathbb{B}^n \to \mathbb{B})\) at face value and treat them as the primary objects of interest. On the second level of analysis we use these spaces as coordinate charts for talking about points and functions in more fundamental spaces.

A pair of spaces, of types \(\mathbb{B}^n\) and \((\mathbb{B}^n \to \mathbb{B}),\) gives typical expression to everything we commonly associate with the ordinary picture of a venn diagram. The dimension, \(n,\) counts the number of “circles” or simple closed curves that are inscribed in the universe of discourse, corresponding to its relevant logical features or basic propositions. Elements of type \(\mathbb{B}^n\) correspond to what are often called propositional interpretations in logic, that is, the different assignments of truth values to sentence letters. Relative to a given universe of discourse, these interpretations are visualized as its cells, in other words, the smallest enclosed areas or undivided regions of the venn diagram. The functions \(f : \mathbb{B}^n \to \mathbb{B}\) correspond to the different ways of shading the venn diagram to indicate arbitrary propositions, regions, or sets. Regions included under a shading indicate the models, and regions excluded represent the non-models of a proposition. To recognize and formalize the natural cohesion of these two layers of concepts into a single universe of discourse, we introduce the type notations \([\mathbb{B}^n] = \mathbb{B}^n\ +\!\to \mathbb{B}\) to stand for the pair of types \((\mathbb{B}^n, (\mathbb{B}^n \to \mathbb{B})).\) The resulting “stereotype” serves to frame the universe of discourse as a unified categorical object, and makes it subject to prescribed sets of evaluations and transformations (categorical morphisms or arrows) that affect the universe of discourse as an integrated whole.

Most of the time we can serve the algebraic, geometric, and logical interests of our study without worrying about their occasional conflicts and incidental divergences. The conventions and definitions already set down will continue to cover most of the algebraic and functional aspects of our discussion, but to handle the logical and qualitative aspects we will need to add a few more. In general, abstract sets may be denoted by gothic, greek, or script capital variants of \(A, B, C,\) and so on, with their elements being denoted by a corresponding set of subscripted letters in plain lower case, for example, \(\mathcal{A} = \{a_i\}.\) Most of the time, a set such as \(\mathcal{A} = \{a_i\}\) will be employed as the alphabet of a formal language. These alphabet letters serve to name the logical features (properties or propositions) that generate a particular universe of discourse. When we want to discuss the particular features of a universe of discourse, beyond the abstract designation of a type like \((\mathbb{B}^n\ +\!\to \mathbb{B}),\) then we may use the following notations. If \(\mathcal{A} = \{a_1, \ldots, a_n\}\) is an alphabet of logical features, then \(A = \langle \mathcal{A} \rangle = \langle a_1, \ldots, a_n \rangle\) is the set of interpretations, \(A^\uparrow = (A \to \mathbb{B})\) is the set of propositions, and \(A^\bullet = [\mathcal{A}] = [a_1, \ldots, a_n]\) is the combination of these interpretations and propositions into the universe of discourse that is based on the features \(\{a_1, \ldots, a_n\}.\)

As always, especially in concrete examples, these rules may be dropped whenever necessary, reverting to a free assortment of feature labels. However, when we need to talk about the logical aspects of a space that is already named as a vector space, it will be necessary to make special provisions. At any rate, these elaborations can be deferred until actually needed.

Philosophy of Notation : Formal Terms and Flexible Types

| Where number is irrelevant, regimented mathematical technique has hitherto tended to be lacking. Thus it is that the progress of natural science has depended so largely upon the discernment of measurable quantity of one sort or another. |

| — W.V. Quine, Mathematical Logic, [Qui, 7] | ||

For much of our discussion propositions and boolean functions are treated as the same formal objects, or as different interpretations of the same formal calculus. This rule of interpretation has exceptions, though. There is a distinctively logical interest in the use of propositional calculus that is not exhausted by its functional interpretation. It is part of our task in this study to deal with these uniquely logical characteristics as they present themselves both in our subject matter and in our formal calculus. Just to provide a hint of what's at stake: In logic, as opposed to the more imaginative realms of mathematics, we consider it a good thing to always know what we are talking about. Where mathematics encourages tolerance for uninterpreted symbols as intermediate terms, logic exerts a keener effort to interpret directly each oblique carrier of meaning, no matter how slight, and to unfold the complicities of every indirection in the flow of information. Translated into functional terms, this means that we want to maintain a continual, immediate, and persistent sense of both the converse relation \(f^{-1} \subseteq \mathbb{B} \times \mathbb{B}^n,\) or what is the same thing, \(f^{-1} : \mathbb{B} \to \mathcal{P}(\mathbb{B}^n),\) and the fibers or inverse images \(f^{-1}(0)\) and \(f^{-1}(1),\) associated with each boolean function \(f : \mathbb{B}^n \to \mathbb{B}\) that we use. In practical terms, the desired implementation of a propositional interpreter should incorporate our intuitive recognition that the induced partition of the functional domain into level sets \(f^{-1}(b),\) for \(b \in \mathbb{B},\) is part and parcel of understanding the denotative uses of each propositional function \(f.\)
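One way a propositional interpreter might keep the level sets in view is sketched below. The helper name `fibers` and the representation of \(f^{-1}\) as a dictionary from \(\mathbb{B}\) to sets of cells are assumptions of this illustration, not a prescribed implementation.

```python
from itertools import product

def fibers(f, n):
    """Return the converse relation of f as a map b -> f^{-1}(b), b in {0, 1}.

    The two level sets are disjoint and together cover B^n,
    so they form the partition of the domain induced by f.
    """
    level = {0: set(), 1: set()}
    for x in product((0, 1), repeat=n):
        level[int(bool(f(*x)))].add(x)
    return level

# Example: the conjunction of two coordinate propositions.
inv = fibers(lambda x, y: x & y, 2)
print(sorted(inv[1]))  # [(1, 1)]
print(sorted(inv[0]))  # [(0, 0), (0, 1), (1, 0)]
```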

Special Classes of Propositions

It is important to remember that the coordinate propositions \(\{a_i\},\) besides being projection maps \(a_i : \mathbb{B}^n \to \mathbb{B},\) are propositions on an equal footing with all others, even though employed as a basis in a particular moment. This set of \(n\) propositions may sometimes be referred to as the basic propositions, the coordinate propositions, or the simple propositions that found a universe of discourse. Either one of the equivalent notations, \(\{a_i : \mathbb{B}^n \to \mathbb{B}\}\) or \((\mathbb{B}^n \xrightarrow{i} \mathbb{B}),\) may be used to indicate the adoption of the propositions \(a_i\) as a basis for describing a universe of discourse.

Among the \(2^{2^n}\) propositions in \([a_1, \ldots, a_n]\) are several families of \(2^n\) propositions each that take on special forms with respect to the basis \(\{ a_1, \ldots, a_n \}.\) Three of these families are especially prominent in the present context, the linear, the positive, and the singular propositions. Each family is naturally parameterized by the coordinate \(n\)-tuples in \(\mathbb{B}^n\) and falls into \(n + 1\) ranks, with a binomial coefficient \(\tbinom{n}{k}\) giving the number of propositions that have rank or weight \(k.\)

- The linear propositions, \(\{ \ell : \mathbb{B}^n \to \mathbb{B} \} = (\mathbb{B}^n \xrightarrow{\ell} \mathbb{B}),\) may be written as sums:

\(\sum_{i=1}^n e_i ~=~ e_1 + \ldots + e_n ~\text{where}~ \left\{\begin{matrix} e_i = a_i \\ \text{or} \\ e_i = 0 \end{matrix}\right\} ~\text{for}~ i = 1 ~\text{to}~ n.\)

- The positive propositions, \(\{ p : \mathbb{B}^n \to \mathbb{B} \} = (\mathbb{B}^n \xrightarrow{p} \mathbb{B}),\) may be written as products:

\(\prod_{i=1}^n e_i ~=~ e_1 \cdot \ldots \cdot e_n ~\text{where}~ \left\{\begin{matrix} e_i = a_i \\ \text{or} \\ e_i = 1 \end{matrix}\right\} ~\text{for}~ i = 1 ~\text{to}~ n.\)

- The singular propositions, \(\{ \mathbf{x} : \mathbb{B}^n \to \mathbb{B} \} = (\mathbb{B}^n \xrightarrow{s} \mathbb{B}),\) may be written as products:

\(\prod_{i=1}^n e_i ~=~ e_1 \cdot \ldots \cdot e_n ~\text{where}~ \left\{\begin{matrix} e_i = a_i \\ \text{or} \\ e_i = \texttt{(} a_i \texttt{)} \end{matrix}\right\} ~\text{for}~ i = 1 ~\text{to}~ n.\)

In each case the rank \(k\) ranges from \(0\) to \(n\) and counts the number of positive appearances of the coordinate propositions \(a_1, \ldots, a_n\) in the resulting expression. For example, for \({n = 3},\) the linear proposition of rank \(0\) is \(0,\) the positive proposition of rank \(0\) is \(1,\) and the singular proposition of rank \(0\) is \(\texttt{(} a_1 \texttt{)(} a_2 \texttt{)(} a_3\texttt{)}.\)
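The parameterization and the rank counts can be checked mechanically for \(n = 3.\) In the sketch below each family is indexed by a tuple \(c \in \mathbb{B}^n\) that marks which coordinates appear positively; the names `linear`, `positive`, `singular`, and `table` are illustrative choices.

```python
from collections import Counter
from itertools import product
from math import comb

n = 3
cells = list(product((0, 1), repeat=n))

def linear(c):
    """Sum over B of the a_i with c_i = 1 (the other terms are 0)."""
    return lambda x: sum(ci & xi for ci, xi in zip(c, x)) % 2

def positive(c):
    """Product of the a_i with c_i = 1 (the other factors are 1)."""
    return lambda x: int(all(xi for ci, xi in zip(c, x) if ci))

def singular(c):
    """Product of a_i where c_i = 1 and (a_i) where c_i = 0."""
    return lambda x: int(tuple(x) == tuple(c))

def table(f):
    """Truth table of f as a tuple of values over all cells."""
    return tuple(f(x) for x in cells)

# Rank k = number of positive appearances = weight of the index tuple.
rank_counts = Counter(sum(c) for c in cells)
print([rank_counts[k] for k in range(n + 1)])  # [1, 3, 3, 1]
print([comb(n, k) for k in range(n + 1)])      # [1, 3, 3, 1]

# Rank 0 members of each family.
zero = (0, 0, 0)
print(table(linear(zero)))    # constant 0
print(table(positive(zero)))  # constant 1
print(table(singular(zero)))  # true only at the cell (0, 0, 0)
```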

The basic propositions \(a_i : \mathbb{B}^n \to \mathbb{B}\) are both linear and positive. So these two kinds of propositions, the linear and the positive, may be viewed as two different ways of generalizing the class of basic propositions.

Linear propositions and positive propositions are generated by taking boolean sums and products, respectively, over selected subsets of basic propositions, so both families of propositions are parameterized by the powerset \(\mathcal{P}(\mathcal{I}),\) that is, the set of all subsets \(J\) of the basic index set \(\mathcal{I} = \{1, \ldots, n\}.\)

Let us define \(\mathcal{A}_J\) as the subset of \(\mathcal{A}\) that is given by \(\{a_i : i \in J\}.\) Then we may comprehend the action of the linear and the positive propositions in the following terms:

- The linear proposition \(\ell_J : \mathbb{B}^n \to \mathbb{B}\) evaluates each cell \(\mathbf{x}\) of \(\mathbb{B}^n\) by looking at the coefficients of \(\mathbf{x}\) with respect to the features that \(\ell_J\) "likes", namely those in \(\mathcal{A}_J,\) and then adds them up in \(\mathbb{B}.\) Thus, \(\ell_J(\mathbf{x})\) computes the parity of the number of features that \(\mathbf{x}\) has in \(\mathcal{A}_J,\) yielding one for odd and zero for even. Expressed in this idiom, \(\ell_J(\mathbf{x}) = 1\) says that \(\mathbf{x}\) seems odd (or oddly true) to \(\mathcal{A}_J,\) whereas \(\ell_J(\mathbf{x}) = 0\) says that \(\mathbf{x}\) seems even (or evenly true) to \(\mathcal{A}_J,\) so long as we recall that zero times is evenly often, too.

- The positive proposition \(p_J : \mathbb{B}^n \to \mathbb{B}\) evaluates each cell \(\mathbf{x}\) of \(\mathbb{B}^n\) by looking at the coefficients of \(\mathbf{x}\) with regard to the features that \(p_J\) "likes", namely those in \(\mathcal{A}_J,\) and then takes their product in \(\mathbb{B}.\) Thus, \(p_J(\mathbf{x})\) assesses the unanimity of the multitude of features that \(\mathbf{x}\) has in \(\mathcal{A}_J,\) yielding one for all and aught for else. In these consensual or contractual terms, \(p_J(\mathbf{x}) = 1\) means that \(\mathbf{x}\) is AOK or congruent with all of the conditions of \(\mathcal{A}_J,\) while \(p_J(\mathbf{x}) = 0\) means that \(\mathbf{x}\) defaults or dissents from some condition of \(\mathcal{A}_J.\)
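The two descriptions translate directly into code. This is only a sketch: the names `ell` and `p`, and the convention that `J` holds 1-based indices into the cell `x`, are assumptions of the example.

```python
def ell(J):
    """Linear proposition: parity of the features of x indexed by J."""
    return lambda x: sum(x[i - 1] for i in J) % 2

def p(J):
    """Positive proposition: 1 iff x has every feature indexed by J."""
    return lambda x: int(all(x[i - 1] for i in J))

x = (1, 0, 1)               # the cell a1 (a2) a3

print(ell({1, 3})(x))       # 0 : two features in A_J, an even number
print(p({1, 3})(x))         # 1 : x has all the features in A_J
print(ell({1, 2})(x))       # 1 : one feature in A_J, an odd number
print(p({1, 2})(x))         # 0 : x lacks a2
print(ell(set())(x), p(set())(x))  # 0 1 : the empty sum and the empty product
```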

Basis Relativity and Type Ambiguity

Finally, two things are important to keep in mind with regard to the simplicity, linearity, positivity, and singularity of propositions.

First, all of these properties are relative to a particular basis. For example, a singular proposition with respect to a basis \(\mathcal{A}\) will not remain singular if \(\mathcal{A}\) is extended by a number of new and independent features. Even if we stick to the original set of pairwise options \(\{a_i\} \cup \{ \texttt{(} a_i \texttt{)} \}\) to select a new basis, the sets of linear and positive propositions are determined by the choice of simple propositions, and this determination is tantamount to the conventional choice of a cell as origin.

Second, the singular propositions \(\mathbb{B}^n \xrightarrow{\mathbf{x}} \mathbb{B},\) picking out as they do a single cell or a coordinate tuple \(\mathbf{x}\) of \(\mathbb{B}^n,\) become the carriers or the vehicles of a certain type-ambiguity that vacillates between the dual forms \(\mathbb{B}^n\) and \((\mathbb{B}^n \xrightarrow{\mathbf{x}} \mathbb{B})\) and infects the whole hierarchy of types built on them. In other words, the terms that signify the interpretations \(\mathbf{x} : \mathbb{B}^n\) and the singular propositions \(\mathbf{x} : \mathbb{B}^n \xrightarrow{\mathbf{x}} \mathbb{B}\) are fully equivalent in information, and this means that every token of the type \(\mathbb{B}^n\) can be reinterpreted as an appearance of the subtype \(\mathbb{B}^n \xrightarrow{\mathbf{x}} \mathbb{B}.\) And vice versa, the two types can be exchanged with each other everywhere that they turn up. In practical terms, this allows the use of singular propositions as a way of denoting points, forming an alternative to coordinate tuples.

For example, relative to the universe of discourse \([a_1, a_2, a_3]\) the singular proposition \(a_1 a_2 a_3 : \mathbb{B}^3 \xrightarrow{s} \mathbb{B}\) could be explicitly retyped as \(a_1 a_2 a_3 : \mathbb{B}^3\) to indicate the point \((1, 1, 1),\) but in most cases the proper interpretation could be gathered from context. Both notations remain dependent on a particular basis, but the code that is generated under the singular option has the advantage in its self-commenting features, in other words, it constantly reminds us of its basis in the process of denoting points. When the time comes to put a multiplicity of different bases into play, and to search for objects and properties that remain invariant under the transformations between them, this infinitesimal potential advantage may well evolve into an overwhelming practical necessity.
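The exchange between points and singular propositions can be sketched as a pair of conversions. The function names below are hypothetical, and `label` is merely one way of rendering the self-commenting form of a point.

```python
from itertools import product

def singular_from_point(point):
    """Retype a point of B^n as the proposition true at that cell only."""
    return lambda x: int(tuple(x) == tuple(point))

def point_from_singular(s, n):
    """Retype a singular proposition on B^n as the one cell it picks out."""
    models = [x for x in product((0, 1), repeat=n) if s(x)]
    if len(models) != 1:
        raise ValueError("not a singular proposition")
    return models[0]

def label(point, names):
    """Write the point as a product of literals, e.g. 'a1 (a2) a3'."""
    return " ".join(a if bit else f"({a})" for a, bit in zip(names, point))

names = ["a1", "a2", "a3"]
s = singular_from_point((1, 1, 1))
print(point_from_singular(s, 3))   # (1, 1, 1)
print(label((1, 1, 1), names))     # a1 a2 a3
print(label((1, 0, 1), names))     # a1 (a2) a3
```

The labeled form carries the names of the basis elements along with it, which is the self-commenting feature at issue; the bare tuple does not.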

The Analogy Between Real and Boolean Types

| Measurement consists in correlating our subject matter with the series of real numbers; and such correlations are desirable because, once they are set up, all the well-worked theory of numerical mathematics lies ready at hand as a tool for our further reasoning. |

| — W.V. Quine, Mathematical Logic, [Qui, 7] | ||

There are two further reasons why it is useful to spend time on a careful treatment of types, and they both have to do with our being able to take full computational advantage of certain dimensions of flexibility in the types that apply to terms. First, the domains of differential geometry and logic programming are connected by analogies between real and boolean types of the same pattern. Second, the types involved in these patterns have important isomorphisms connecting them that apply on both the real and the boolean sides of the picture.

Amazingly enough, these isomorphisms are themselves schematized by the axioms and theorems of propositional logic. This fact is known as the propositions as types analogy or the Curry–Howard isomorphism [How]. In another formulation it says that terms are to types as proofs are to propositions. See [LaS, 42–46] and [SeH] for a good discussion and further references. To anticipate the bearing of these issues on our immediate topic, Table 3 sketches a partial overview of the Real to Boolean analogy that may serve to illustrate the paradigm in question.

| \(\text{Real Domain} ~ \mathbb{R}\) | \(\longleftrightarrow\) | \(\text{Boolean Domain} ~ \mathbb{B}\) |

| \(\mathbb{R}^n\) | \(\text{Basic Space}\) | \(\mathbb{B}^n\) |

| \(\mathbb{R}^n \to \mathbb{R}\) | \(\text{Function Space}\) | \(\mathbb{B}^n \to \mathbb{B}\) |

| \((\mathbb{R}^n \to \mathbb{R}) \to \mathbb{R}\) | \(\text{Tangent Vector}\) | \((\mathbb{B}^n \to \mathbb{B}) \to \mathbb{B}\) |

| \(\mathbb{R}^n \to ((\mathbb{R}^n \to \mathbb{R}) \to \mathbb{R})\) | \(\text{Vector Field}\) | \(\mathbb{B}^n \to ((\mathbb{B}^n \to \mathbb{B}) \to \mathbb{B})\) |

| \((\mathbb{R}^n \times (\mathbb{R}^n \to \mathbb{R})) \to \mathbb{R}\) | " | \((\mathbb{B}^n \times (\mathbb{B}^n \to \mathbb{B})) \to \mathbb{B}\) |

| \(((\mathbb{R}^n \to \mathbb{R}) \times \mathbb{R}^n) \to \mathbb{R}\) | " | \(((\mathbb{B}^n \to \mathbb{B}) \times \mathbb{B}^n) \to \mathbb{B}\) |

| \((\mathbb{R}^n \to \mathbb{R}) \to (\mathbb{R}^n \to \mathbb{R})\) | \(\text{Derivation}\) | \((\mathbb{B}^n \to \mathbb{B}) \to (\mathbb{B}^n \to \mathbb{B})\) |

| \(\mathbb{R}^n \to \mathbb{R}^m\) | \(\begin{matrix}\text{Basic}\\[2pt]\text{Transformation}\end{matrix}\) | \(\mathbb{B}^n \to \mathbb{B}^m\) |

| \((\mathbb{R}^n \to \mathbb{R}) \to (\mathbb{R}^m \to \mathbb{R})\) | \(\begin{matrix}\text{Function}\\[2pt]\text{Transformation}\end{matrix}\) | \((\mathbb{B}^n \to \mathbb{B}) \to (\mathbb{B}^m \to \mathbb{B})\) |

The Table exhibits a sample of likely parallels between the real and boolean domains. The central column gives a selection of terminology that is borrowed from differential geometry and extended in its meaning to the logical side of the Table. These are the varieties of spaces that come up when we turn to analyzing the dynamics of processes that pursue their courses through the states of an arbitrary space \(X.\) Moreover, when it becomes necessary to approach situations of overwhelming dynamic complexity in a succession of qualitative reaches, then the methods of logic that are afforded by the boolean domains, with their declarative means of synthesis and deductive modes of analysis, supply a natural battery of tools for the task.
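The three rows grouped under the heading of Vector Field differ only by currying and by the order of the two arguments. Read as propositions, these isomorphisms correspond to the equivalence of \((A \land B) \Rightarrow C\) with \(A \Rightarrow (B \Rightarrow C)\) and to the commutativity of conjunction. The sketch below illustrates the type conversions with hypothetical helpers `curry`, `uncurry`, and `swap`; the evaluation map is used only as a convenient inhabitant of the uncurried type, not as a claim about any particular vector field.

```python
def curry(g):
    """((X x F) -> K)  becomes  (X -> (F -> K))."""
    return lambda x: lambda f: g(x, f)

def uncurry(h):
    """(X -> (F -> K))  becomes  ((X x F) -> K)."""
    return lambda x, f: h(x)(f)

def swap(g):
    """((X x F) -> K)  becomes  ((F x X) -> K)."""
    return lambda f, x: g(x, f)

def evaluate(x, f):
    """An inhabitant of (B^n x (B^n -> B)) -> B: apply f at the point x."""
    return f(x)

conj = lambda x: x[0] & x[1]   # a proposition on B^2

print(curry(evaluate)((1, 1))(conj))           # 1
print(uncurry(curry(evaluate))((1, 0), conj))  # 0
print(swap(evaluate)(conj, (1, 1)))            # 1
```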

It is usually expedient to take these spaces two at a time, in dual pairs of the form \(X\) and \((X \to \mathbb{K}).\) In general, one creates pairs of type schemas by replacing any space \(X\) with its dual \((X \to \mathbb{K}),\) for example, pairing the type \(X \to Y\) with the type \((X \to \mathbb{K}) \to (Y \to \mathbb{K}),\) and \(X \times Y\) with \((X \to \mathbb{K}) \times (Y \to \mathbb{K}).\) The word dual is used here in its broader sense to mean all of the functionals, not just the linear ones. Given any function \(f : X \to \mathbb{K},\) the converse or inverse relation corresponding to \(f\) is denoted \(f^{-1},\) and the subsets of \(X\) that are defined by \(f^{-1}(k),\) taken over \(k\) in \(\mathbb{K},\) are called the fibers or the level sets of the function \(f.\)

Theory of Control and Control of Theory

| You will hardly know who I am or what I mean, |

| — Walt Whitman, Leaves of Grass, [Whi, 88] |

In the boolean context a function \(f : X \to \mathbb{B}\) is tantamount to a proposition about elements of \(X,\) and the elements of \(X\) constitute the interpretations of that proposition. The fiber \(f^{-1}(1)\) comprises the set of models of \(f,\) or examples of elements in \(X\) satisfying the proposition \(f.\) The fiber \(f^{-1}(0)\) collects the complementary set of anti-models, or the exceptions to the proposition \(f\) that exist in \(X.\) Of course, the space of functions \((X \to \mathbb{B})\) is isomorphic to the set of all subsets of \(X,\) called the power set of \(X,\) and often denoted \(\mathcal{P}(X)\) or \(2^X.\)
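The correspondence between \((X \to \mathbb{B})\) and \(\mathcal{P}(X)\) sends each function to its fiber of models and each subset to its indicator function. A brief sketch for \(X = \mathbb{B}^2,\) with the illustrative names `subset_of` and `indicator`:

```python
from itertools import product

X = list(product((0, 1), repeat=2))

def subset_of(f):
    """Send a proposition f : X -> B to its set of models f^{-1}(1)."""
    return frozenset(x for x in X if f(x))

def indicator(S):
    """Send a subset S of X to the proposition that is 1 exactly on S."""
    return lambda x: int(x in S)

# Enumerate every function X -> B by its table of values.
tables = [dict(zip(X, values)) for values in product((0, 1), repeat=len(X))]
subsets = {subset_of(lambda x, t=t: t[x]) for t in tables}

print(len(tables), len(subsets))  # 16 16
print(all(subset_of(indicator(S)) == S for S in subsets))  # True
```

Since distinct tables yield distinct subsets and every subset is recovered from its indicator, the two collections are in one-to-one correspondence, each having \(2^{|X|}\) members.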

The operation of replacing \(X\) by \((X \to \mathbb{B})\) in a type schema corresponds to a certain shift of attitude towards the space \(X,\) in which one passes from a focus on the ostensibly individual elements of \(X\) to a concern with the states of information and uncertainty that one possesses about objects and situations in \(X.\) The conceptual obstacles in the path of this transition can be smoothed over by using singular functions \((\mathbb{B}^n \xrightarrow{\mathbf{x}} \mathbb{B})\) as stepping stones. First of all, it's an easy step from an element \(\mathbf{x}\) of type \(\mathbb{B}^n\) to the equivalent information of a singular proposition \(\mathbf{x} : X \xrightarrow{s} \mathbb{B}, \) and then only a small jump of generalization remains to reach the type of an arbitrary proposition \(f : X \to \mathbb{B},\) perhaps understood to indicate a relaxed constraint on the singularity of points or a neighborhood circumscribing the original \(\mathbf{x}.\) This is frequently a useful transformation, communicating between the objective and the intentional perspectives, in spite perhaps of the open objection that this distinction is transient in the mean time and ultimately superficial.

It is hoped that this measure of flexibility, allowing us to stretch a point into a proposition, can be useful in the examination of inquiry driven systems, where the differences between empirical, intentional, and theoretical propositions constitute the discrepancies and the distributions that drive experimental activity. I can give this model of inquiry a cybernetic cast by realizing that theory change and theory evolution, as well as the choice and the evaluation of experiments, are actions that are taken by a system or its agent in response to the differences that are detected between observational contents and theoretical coverage.

All of the above notwithstanding, there are several points that distinguish these two tasks, namely, the theory of control and the control of theory, features that are often obscured by the haste with which we grasp their similarities. In the control of uncertainty through inquiry, some of the actuators that we need to be concerned with are axiom changers and theory modifiers, operators with the power to compile and to revise the theories that generate expectations and predictions, effectors that form and edit our grammars for the languages of observational data, and agencies that rework the proposed model to fit the actual sequences of events and the realized relationships of values that are observed in the environment. Moreover, when steps must be taken to carry out an experimental action, there must be something about the particular shape of our uncertainty that guides us in choosing what directions to explore, and this impression is more than likely influenced by previous accumulations of experience. Thus it must be anticipated that much of what goes into scientific progress, or any sustainable effort toward a goal of knowledge, is necessarily predicated on long-term observation and modal expectations, not only on the more local or short-term prediction and correction.

Propositions as Types and Higher Order Types

The types collected in Table 3 (repeated below) serve to illustrate the themes of higher order propositional expressions and the propositions as types (PAT) analogy.

| \(\text{Real Domain} ~ \mathbb{R}\) | \(\longleftrightarrow\) | \(\text{Boolean Domain} ~ \mathbb{B}\) |

| \(\mathbb{R}^n\) | \(\text{Basic Space}\) | \(\mathbb{B}^n\) |

| \(\mathbb{R}^n \to \mathbb{R}\) | \(\text{Function Space}\) | \(\mathbb{B}^n \to \mathbb{B}\) |

| \((\mathbb{R}^n \to \mathbb{R}) \to \mathbb{R}\) | \(\text{Tangent Vector}\) | \((\mathbb{B}^n \to \mathbb{B}) \to \mathbb{B}\) |

| \(\mathbb{R}^n \to ((\mathbb{R}^n \to \mathbb{R}) \to \mathbb{R})\) | \(\text{Vector Field}\) | \(\mathbb{B}^n \to ((\mathbb{B}^n \to \mathbb{B}) \to \mathbb{B})\) |

| \((\mathbb{R}^n \times (\mathbb{R}^n \to \mathbb{R})) \to \mathbb{R}\) | " | \((\mathbb{B}^n \times (\mathbb{B}^n \to \mathbb{B})) \to \mathbb{B}\) |

| \(((\mathbb{R}^n \to \mathbb{R}) \times \mathbb{R}^n) \to \mathbb{R}\) | " | \(((\mathbb{B}^n \to \mathbb{B}) \times \mathbb{B}^n) \to \mathbb{B}\) |

| \((\mathbb{R}^n \to \mathbb{R}) \to (\mathbb{R}^n \to \mathbb{R})\) | \(\text{Derivation}\) | \((\mathbb{B}^n \to \mathbb{B}) \to (\mathbb{B}^n \to \mathbb{B})\) |

| \(\mathbb{R}^n \to \mathbb{R}^m\) | \(\begin{matrix}\text{Basic}\\[2pt]\text{Transformation}\end{matrix}\) | \(\mathbb{B}^n \to \mathbb{B}^m\) |

| \((\mathbb{R}^n \to \mathbb{R}) \to (\mathbb{R}^m \to \mathbb{R})\) | \(\begin{matrix}\text{Function}\\[2pt]\text{Transformation}\end{matrix}\) | \((\mathbb{B}^n \to \mathbb{B}) \to (\mathbb{B}^m \to \mathbb{B})\) |

First, observe that the type of a tangent vector at a point, also known as a directional derivative at that point, has the form \((\mathbb{K}^n \to \mathbb{K}) \to \mathbb{K},\) where \(\mathbb{K}\) is the chosen ground field, in the present case either \(\mathbb{R}\) or \(\mathbb{B}.\) At a point in a space of type \(\mathbb{K}^n,\) a directional derivative operator \(\vartheta\) takes a function on that space, an \(f\) of type \((\mathbb{K}^n \to \mathbb{K}),\) and maps it to a ground field value of type \(\mathbb{K}.\) This value is known as the derivative of \(f\) in the direction \(\vartheta\) [Che46, 76–77]. In the boolean case \(\vartheta : (\mathbb{B}^n \to \mathbb{B}) \to \mathbb{B}\) has the form of a proposition about propositions, in other words, a proposition of the next higher type.

Next, by way of illustrating the propositions as types idea, consider a proposition of the form \(X \Rightarrow (Y \Rightarrow Z).\) One knows from propositional calculus that this is logically equivalent to a proposition of the form \((X \land Y) \Rightarrow Z.\) But this equivalence should remind us of the functional isomorphism that exists between a construction of the type \(X \to (Y \to Z)\) and a construction of the type \((X \times Y) \to Z.\) The propositions as types analogy permits us to take a functional type like this and, under the right conditions, replace the functional arrows “\(\to\)” and products “\(\times\)” with the respective logical arrows “\(\Rightarrow\)” and products “\(\land\)”. Accordingly, viewing the result as a proposition, we can employ axioms and theorems of propositional calculus to suggest appropriate isomorphisms among the categorical and functional constructions.
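The logical equivalence invoked here can be confirmed by exhausting the eight interpretations of \(X, Y, Z.\) The following is a minimal sketch, with the helper name `implies` introduced only for illustration.

```python
from itertools import product

def implies(p, q):
    """Material implication on boolean values."""
    return (not p) or q

# Compare X => (Y => Z) with (X and Y) => Z on all eight interpretations.
rows = [(x, y, z, implies(x, implies(y, z)), implies(x and y, z))
        for x, y, z in product((False, True), repeat=3)]
equivalent = all(lhs == rhs for *_, lhs, rhs in rows)
```

The check covers only the propositional side of the analogy. The corresponding statement about function types is the isomorphism discussed in the text.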

Finally, examine the middle four rows of Table 3. These display a series of isomorphic types that stretch from the categories that are labeled Vector Field to those that are labeled Derivation. A vector field, also known as an infinitesimal transformation, associates a tangent vector at a point with each point of a space. In symbols, a vector field is a function of the form \(\textstyle \xi : X \to \bigcup_{x \in X} \xi_x\) that assigns to each point \(x\) of the space \(X\) a tangent vector to \(X\) at that point, namely, the tangent vector \(\xi_x\) [Che46, 82–83]. If \(X\) is of the type \(\mathbb{K}^n,\) then \(\xi\) is of the type \(\mathbb{K}^n \to ((\mathbb{K}^n \to \mathbb{K}) \to \mathbb{K}).\) This has the pattern \(X \to (Y \to Z),\) with \(X = \mathbb{K}^n,\) \(Y = (\mathbb{K}^n \to \mathbb{K}),\) and \(Z = \mathbb{K}.\)

Applying the propositions as types analogy, one can follow this pattern through a series of metamorphoses from the type of a vector field to the type of a derivation, as traced out in Table 4. Observe how the function \(f : X \to \mathbb{K},\) associated with the place of \(Y\) in the pattern, moves through its paces from the second to the first position. In this way, the vector field \(\xi,\) initially viewed as attaching each tangent vector \(\xi_x\) to the site \(x\) where it acts in \(X,\) now comes to be seen as acting on each scalar potential \(f : X \to \mathbb{K}\) like a generalized species of differentiation, producing another function \(\xi f : X \to \mathbb{K}\) of the same type.

| \(\text{Pattern}\) | \(\text{Construct}\) | \(\text{Instance}\) |

| \(X \to (Y \to Z)\) | \(\text{Vector Field}\) | \(\mathbb{K}^n \to ((\mathbb{K}^n \to \mathbb{K}) \to \mathbb{K})\) |

| \((X \times Y) \to Z\) | \(\Uparrow\) | \((\mathbb{K}^n \times (\mathbb{K}^n \to \mathbb{K})) \to \mathbb{K}\) |

| \((Y \times X) \to Z\) | \(\Downarrow\) | \(((\mathbb{K}^n \to \mathbb{K}) \times \mathbb{K}^n) \to \mathbb{K}\) |

| \(Y \to (X \to Z)\) | \(\text{Derivation}\) | \((\mathbb{K}^n \to \mathbb{K}) \to (\mathbb{K}^n \to \mathbb{K})\) |
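The passage from a vector field to a derivation can be traced step by step in the boolean case. The following is a minimal sketch under stated assumptions: the field `xi` is hypothetical (at each point it compares a function's value with its value after flipping the first coordinate), and the helpers `uncurry`, `swap`, and `curry` are illustrative names for the three type conversions in Table 4.

```python
from itertools import product

n = 2
points = list(product((0, 1), repeat=n))

def xi(x):
    """Hypothetical vector field: at x, compare f(x) with f at the point
    reached by flipping the first coordinate (an assumed direction)."""
    moved = (1 - x[0],) + x[1:]
    return lambda f: f(x) ^ f(moved)

def uncurry(g):
    """X -> (Y -> Z)  becomes  (X x Y) -> Z."""
    return lambda x, y: g(x)(y)

def swap(h):
    """(X x Y) -> Z  becomes  (Y x X) -> Z."""
    return lambda y, x: h(x, y)

def curry(h):
    """(Y x X) -> Z  becomes  Y -> (X -> Z)."""
    return lambda y: lambda x: h(y, x)

# The same data, now of type (B^n -> B) -> (B^n -> B).
derivation = curry(swap(uncurry(xi)))

def f(x):
    return x[0] & x[1]   # conjunction of the two coordinates

xi_f = derivation(f)     # another function of type B^n -> B
table = {x: xi_f(x) for x in points}
```

With this choice of `xi`, the function `xi_f` is true exactly where the second coordinate is true, which is where flipping the first coordinate changes the value of the conjunction.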

Reality at the Threshold of Logic

| But no science can rest entirely on measurement, and many scientific investigations are quite out of reach of that device. To the scientist longing for non-quantitative techniques, then, mathematical logic brings hope. |

| — W.V. Quine, Mathematical Logic, [Qui, 7] |

Table 5 accumulates an array of notation that I hope will not be too distracting. Some of it is rarely needed, but has been filled in for the sake of completeness. Its purpose is simple, to give literal expression to the visual intuitions that come with Venn diagrams, and to help build a bridge between our qualitative and quantitative outlooks on dynamic systems.

| \(\text{Linear Space}\) | \(\text{Liminal Space}\) | \(\text{Logical Space}\) |

| \(\begin{matrix}\mathcal{X} & = & \{ x_1, \ldots, x_n \}\end{matrix}\) | \(\begin{matrix}\underline{\mathcal{X}} & = & \{ \underline{x}_1, \ldots, \underline{x}_n \}\end{matrix}\) | \(\begin{matrix}\mathcal{A} & = & \{ a_1, \ldots, a_n \}\end{matrix}\) |

| \(\begin{matrix} X_i & = & \langle x_i \rangle \\ & \cong & \mathbb{K} \end{matrix}\) | \(\begin{matrix} \underline{X}_i & = & \{ \texttt{(} \underline{x}_i \texttt{)}, \underline{x}_i \} \\ & \cong & \mathbb{B} \end{matrix}\) | \(\begin{matrix} A_i & = & \{ \texttt{(} a_i \texttt{)}, a_i \} \\ & \cong & \mathbb{B} \end{matrix}\) |

| \(\begin{matrix} X \\ = & \langle \mathcal{X} \rangle \\ = & \langle x_1, \ldots, x_n \rangle \\ = & X_1 \times \ldots \times X_n \\ = & \prod_{i=1}^n X_i \\ \cong & \mathbb{K}^n \end{matrix}\) | \(\begin{matrix} \underline{X} \\ = & \langle \underline{\mathcal{X}} \rangle \\ = & \langle \underline{x}_1, \ldots, \underline{x}_n \rangle \\ = & \underline{X}_1 \times \ldots \times \underline{X}_n \\ = & \prod_{i=1}^n \underline{X}_i \\ \cong & \mathbb{B}^n \end{matrix}\) | \(\begin{matrix} A \\ = & \langle \mathcal{A} \rangle \\ = & \langle a_1, \ldots, a_n \rangle \\ = & A_1 \times \ldots \times A_n \\ = & \prod_{i=1}^n A_i \\ \cong & \mathbb{B}^n \end{matrix}\) |

| \(\begin{matrix} X^* & = & (\ell : X \to \mathbb{K}) \\ & \cong & \mathbb{K}^n \end{matrix}\) | \(\begin{matrix} \underline{X}^* & = & (\ell : \underline{X} \to \mathbb{B}) \\ & \cong & \mathbb{B}^n \end{matrix}\) | \(\begin{matrix} A^* & = & (\ell : A \to \mathbb{B}) \\ & \cong & \mathbb{B}^n \end{matrix}\) |

| \(\begin{matrix} X^\uparrow & = & (X \to \mathbb{K}) \\ & \cong & (\mathbb{K}^n \to \mathbb{K}) \end{matrix}\) | \(\begin{matrix} \underline{X}^\uparrow & = & (\underline{X} \to \mathbb{B}) \\ & \cong & (\mathbb{B}^n \to \mathbb{B}) \end{matrix}\) | \(\begin{matrix} A^\uparrow & = & (A \to \mathbb{B}) \\ & \cong & (\mathbb{B}^n \to \mathbb{B}) \end{matrix}\) |

| \(\begin{matrix} X^\bullet \\ = & [\mathcal{X}] \\ = & [x_1, \ldots, x_n] \\ = & (X, X^\uparrow) \\ = & (X ~+\!\to \mathbb{K}) \\ = & (X, (X \to \mathbb{K})) \\ \cong & (\mathbb{K}^n, (\mathbb{K}^n \to \mathbb{K})) \\ = & (\mathbb{K}^n ~+\!\to \mathbb{K}) \\ = & [\mathbb{K}^n] \end{matrix}\) | \(\begin{matrix} \underline{X}^\bullet \\ = & [\underline{\mathcal{X}}] \\ = & [\underline{x}_1, \ldots, \underline{x}_n] \\ = & (\underline{X}, \underline{X}^\uparrow) \\ = & (\underline{X} ~+\!\to \mathbb{B}) \\ = & (\underline{X}, (\underline{X} \to \mathbb{B})) \\ \cong & (\mathbb{B}^n, (\mathbb{B}^n \to \mathbb{B})) \\ = & (\mathbb{B}^n ~+\!\to \mathbb{B}) \\ = & [\mathbb{B}^n] \end{matrix}\) | \(\begin{matrix} A^\bullet \\ = & [\mathcal{A}] \\ = & [a_1, \ldots, a_n] \\ = & (A, A^\uparrow) \\ = & (A ~+\!\to \mathbb{B}) \\ = & (A, (A \to \mathbb{B})) \\ \cong & (\mathbb{B}^n, (\mathbb{B}^n \to \mathbb{B})) \\ = & (\mathbb{B}^n ~+\!\to \mathbb{B}) \\ = & [\mathbb{B}^n] \end{matrix}\) |

The left side of the Table collects mostly standard notation for an \(n\)-dimensional vector space over a field \(\mathbb{K}.\) The right side of the Table repeats the first elements of a notation that I sketched above, to be used in further developments of propositional calculus. (I plan to use this notation in the logical analysis of neural network systems.) The middle column of the Table is designed as a transitional step from the case of an arbitrary field \(\mathbb{K},\) with a special interest in the continuous line \(\mathbb{R},\) to the qualitative and discrete situations that are instanced and typified by \(\mathbb{B}.\)

I now proceed to explain these concepts in more detail. The most important ideas developed in Table 5 are these:

- The idea of a universe of discourse, which includes both a space of points and a space of maps on those points.

- The idea of passing from a more complex universe to a simpler universe by a process of thresholding each dimension of variation down to a single bit of information.

For the sake of concreteness, let us suppose that we start with a continuous \(n\)-dimensional vector space like \(X = \langle x_1, \ldots, x_n \rangle \cong \mathbb{R}^n.\) The coordinate system \(\mathcal{X} = \{x_i\}\) is a set of maps \(x_i : \mathbb{R}^n \to \mathbb{R},\) also known as the coordinate projections. Given a "dataset" of points \(\mathbf{x}\) in \(\mathbb{R}^n,\) there are numerous ways of sensibly reducing the data down to one bit for each dimension. One strategy that is general enough for our present purposes is as follows. For each \(i\) we choose an \(n\)-ary relation \(L_i\) on \(\mathbb{R},\) that is, a subset of \(\mathbb{R}^n,\) and then we define the \(i^\mathrm{th}\) threshold map, or limen \(\underline{x}_i,\) as follows:

| \(\underline{x}_i : \mathbb{R}^n \to \mathbb{B}\ \text{such that:}\) |

|

\(\begin{matrix} \underline{x}_i(\mathbf{x}) = 1 & \text{if} & \mathbf{x} \in L_i, \\[4pt] \underline{x}_i(\mathbf{x}) = 0 & \text{if} & \mathbf{x} \not\in L_i. \end{matrix}\) |

In other notations that are sometimes used, the operator \(\chi (\ldots)\) or the corner brackets \(\lceil\ldots\rceil\) can be used to denote a characteristic function, that is, a mapping from statements to their truth values in \(\mathbb{B}.\) Finally, it is not uncommon to use the name of the relation itself as a predicate that maps \(n\)-tuples into truth values. Thus we have the following notational variants of the above definition:

|

\(\begin{matrix} \underline{x}_i (\mathbf{x}) & = & \chi (\mathbf{x} \in L_i) & = & \lceil \mathbf{x} \in L_i \rceil & = & L_i (\mathbf{x}). \end{matrix}\) |

Notice that, as defined here, there need be no actual relation between the \(n\)-dimensional subsets \(\{L_i\}\) and the coordinate axes corresponding to \(\{x_i\},\) aside from the circumstance that the two sets have the same cardinality. In concrete cases, though, one usually has some reason for associating these "volumes" with these "lines", for instance, \(L_i\) is bounded by some hyperplane that intersects the \(i^\text{th}\) axis at a unique threshold value \(r_i \in \mathbb{R}.\) Often, the hyperplane is chosen normal to the axis. In recognition of this motive, let us make the following convention. When the set \(L_i\) has points on the \(i^\text{th}\) axis, that is, points of the form \((0, \ldots, 0, r_i, 0, \ldots, 0)\) where only the \(x_i\) coordinate is possibly non-zero, we may pick any one of these coordinate values as a parametric index of the relation. In this case we say that the indexing is real, otherwise the indexing is imaginary. For a knowledge based system \(X,\) this should serve once again to mark the distinction between acquaintance and opinion.

States of knowledge about the location of a system or about the distribution of a population of systems in a state space \(X = \mathbb{R}^n\) can now be expressed by taking the set \(\underline{\mathcal{X}} = \{\underline{x}_i\}\) as a basis of logical features. In picturesque terms, one may think of the underscore and the subscript as combining to form a subtextual spelling for the \(i^\text{th}\) threshold map. This can help to remind us that the threshold operator \((\underline{~})_i\) acts on \(\mathbf{x}\) by setting up a kind of a “hurdle” for it. In this interpretation the coordinate proposition \(\underline{x}_i\) asserts that the representative point \(\mathbf{x}\) resides above the \(i^\mathrm{th}\) threshold.
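The thresholding step can be made concrete in a few lines. The following is a minimal sketch, assuming that each region \(L_i\) is the open half-space lying above a threshold value on the \(i^\text{th}\) axis. The text allows \(L_i\) to be any subset of \(\mathbb{R}^n,\) so this is only one special case, and the threshold values and the names `make_limen` and `threshold` are hypothetical. Indices in the code start at 0 rather than 1.

```python
def make_limen(i, r):
    """Return the threshold map for the region L_i = {x : x[i] > r}.

    The half-space form of L_i is an assumption made for illustration;
    the text allows any subset of R^n.
    """
    return lambda x: 1 if x[i] > r else 0

thresholds = [0.5, -1.0, 2.0]   # hypothetical threshold values, one per axis
limens = [make_limen(i, r) for i, r in enumerate(thresholds)]

def threshold(x):
    """Reduce a point of R^n to a point of B^n, one bit per dimension."""
    return tuple(limen(x) for limen in limens)

cell = threshold((0.7, -3.0, 2.5))   # the cell of B^3 containing this point
```

Each real-valued point is thereby assigned to one of the \(2^n\) cells of the logical universe, and all points falling in the same cell become indistinguishable at this level of description.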

Primitive assertions of the form \(\underline{x}_i (\mathbf{x})\) may then be negated and joined by means of propositional connectives in the usual ways to provide information about the state \(\mathbf{x}\) of a contemplated system or a statistical ensemble of systems. Parentheses \(\texttt{(} \ldots \texttt{)}\) may be used to indicate logical negation. Eventually one discovers the usefulness of the \(k\)-ary just one false operators of the form \(\texttt{(} a_1 \texttt{,} \ldots \texttt{,} a_k \texttt{)},\) as treated in earlier reports. This much tackle generates a space of points (cells, interpretations), \(\underline{X} \cong \mathbb{B}^n,\) and a space of functions (regions, propositions), \(\underline{X}^\uparrow \cong (\mathbb{B}^n \to \mathbb{B}).\) Together these form a new universe of discourse \(\underline{X}^\bullet\) of the type \((\mathbb{B}^n, (\mathbb{B}^n \to \mathbb{B})),\) which we may abbreviate as \(\mathbb{B}^n\ +\!\to \mathbb{B}\) or most succinctly as \([\mathbb{B}^n].\)
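The two connectives and the resulting universe can be sketched directly. The following is a minimal illustration, in which the function names `lobe`, `conj`, and `propositions` are assumptions introduced here and are not part of the cactus language itself.

```python
from itertools import product

def lobe(*args):
    """Bracketed form ( e_1, ..., e_k ): true when exactly one argument is false."""
    return int(sum(1 for a in args if not a) == 1)

def conj(*args):
    """Concatenated form e_1 ... e_k: true when every argument is true."""
    return int(all(args))

def propositions(n):
    """Yield every function B^n -> B as a dict from cells to values."""
    cells = list(product((0, 1), repeat=n))
    for values in product((0, 1), repeat=len(cells)):
        yield dict(zip(cells, values))

pairs = list(product((0, 1), repeat=2))
negation = [lobe(a) for a in (0, 1)]          # ( a )
unequal = [lobe(a, b) for a, b in pairs]      # ( a , b )
both = [conj(a, b) for a, b in pairs]         # a b
count = sum(1 for _ in propositions(2))       # size of the function space on B^2
```

With one argument the bracket acts as negation, with two arguments it asserts inequality, and the universe \([\mathbb{B}^2]\) carries \(2^{2^2} = 16\) propositions on its 4 cells.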

The square brackets have been chosen to recall the rectangular frame of a Venn diagram. In thinking about a universe of discourse it is a good idea to keep this picture in mind, graphically illustrating the links among the elementary cells \(\underline{\mathbf{x}},\) the defining features \(\underline{x}_i,\) and the potential shadings \(f : \underline{X} \to \mathbb{B}\) all at the same time, not to mention the arbitrariness of the way we choose to inscribe our distinctions in the medium of a continuous space.

Finally, let \(X^*\) denote the space of linear functions, \((\ell : X \to \mathbb{K}),\) which has in the finite case the same dimensionality as \(X,\) and let the same notation be extended across the Table.
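In the boolean case the claim about dimensionality can be checked by enumeration. The following is a minimal sketch, assuming \(n = 3\) and treating \(\mathbb{B}\) as the field \(\mathrm{GF}(2),\) so that a linear function is a sum of selected coordinates taken mod 2. The names `linear` and `add` are illustrative.

```python
from itertools import product

n = 3
cells = list(product((0, 1), repeat=n))

def linear(coeffs):
    """Linear function on B^n over GF(2) with the given coefficient vector."""
    return lambda x: sum(c * xi for c, xi in zip(coeffs, x)) % 2

def add(u, v):
    """Vector addition in B^n, taken coordinate by coordinate mod 2."""
    return tuple((a + b) % 2 for a, b in zip(u, v))

# One linear function per coefficient vector in B^n.
dual = [linear(c) for c in cells]

# Each of these functions respects addition ...
additive = all(l(add(u, v)) == (l(u) + l(v)) % 2
               for l in dual for u in cells for v in cells)

# ... and distinct coefficient vectors give distinct functions.
distinct = len({tuple(l(x) for x in cells) for l in dual})
```

The \(2^n\) coefficient vectors yield \(2^n\) distinct linear functions, in agreement with the entry \(A^* \cong \mathbb{B}^n\) in the Table.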

We have just gone through a lot of work, apparently doing nothing more substantial than spinning a complex spell of notational devices through a labyrinth of baffled spaces and baffling maps. The reason for doing this was to bind together and to constitute the intuitive concept of a universe of discourse into a coherent categorical object, the kind of thing, once grasped, that can be turned over in the mind and considered in all its manifold changes and facets. The effort invested in these preliminary measures is intended to pay off later, when we need to consider the state transformations and the time evolution of neural network systems.

Tables of Propositional Forms

| To the scientist longing for non-quantitative techniques, then, mathematical logic brings hope. It provides explicit techniques for manipulating the most basic ingredients of discourse. |

| — W.V. Quine, Mathematical Logic, [Qui, 7–8] |

To prepare for the next phase of discussion, Tables 6 and 7 collect and summarize all of the propositional forms on one and two variables. These propositional forms are represented over bases of boolean variables as complete sets of boolean-valued functions. Adjacent to their names and specifications are listed what are roughly the simplest expressions in the cactus language, the particular syntax for propositional calculus that I use in formal and computational contexts. For the sake of orientation, the English paraphrases and the more common notations are listed in the last two columns. As simple and circumscribed as these low-dimensional universes may appear to be, a careful exploration of their differential extensions will involve us in complexities sufficient to demand our attention for some time to come.

Propositional forms on one variable correspond to boolean functions \(f : \mathbb{B}^1 \to \mathbb{B}.\) In Table 6 these functions are listed in a variant form of truth table, one in which the axes of the usual arrangement are rotated through a right angle. Each function \(f_i\) is indexed by the string of values that it takes on the points of the universe \(X^\bullet = [x] \cong \mathbb{B}^1.\) The binary index generated in this way is converted to its decimal equivalent and these are used as conventional names for the \(f_i,\) as shown in the first column of the Table. In their own right the \(2^1\) points of the universe \(X^\bullet\) are coordinated as a space of type \(\mathbb{B}^1,\) this in light of the universe \(X^\bullet\) being a functional domain where the coordinate projection \(x\) takes on its values in \(\mathbb{B}.\)

| \(\begin{matrix}\mathcal{L}_1 \\ \text{Decimal}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_2 \\ \text{Binary}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_3 \\ \text{Vector}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_4 \\ \text{Cactus}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_5 \\ \text{English}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_6 \\ \text{Ordinary}\end{matrix}\) |

| | \(x\colon\) | \(1~0\) | | | |

| \(f_0\) | \(f_{00}\) | \(0~0\) | \(\texttt{(} ~ \texttt{)}\) | \(\text{false}\) | \(0\) |

| \(f_1\) | \(f_{01}\) | \(0~1\) | \(\texttt{(} x \texttt{)}\) | \(\text{not}~ x\) | \(\lnot x\) |

| \(f_2\) | \(f_{10}\) | \(1~0\) | \(x\) | \(x\) | \(x\) |

| \(f_3\) | \(f_{11}\) | \(1~1\) | \(\texttt{((} ~ \texttt{))}\) | \(\text{true}\) | \(1\) |

Propositional forms on two variables correspond to boolean functions \(f : \mathbb{B}^2 \to \mathbb{B}.\) In Table 7 each function \(f_i\) is indexed by the values that it takes on the points of the universe \(X^\bullet = [x, y] \cong \mathbb{B}^2.\) Converting the binary index thus generated to a decimal equivalent, we obtain the functional nicknames that are listed in the first column. The \(2^2\) points of the universe \(X^\bullet\) are coordinated as a space of type \(\mathbb{B}^2,\) as indicated under the heading of the Table, where the coordinate projections \(x\) and \(y\) run through the various combinations of their values in \(\mathbb{B}.\)

| \(\begin{matrix}\mathcal{L}_1 \\ \text{Decimal}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_2 \\ \text{Binary}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_3 \\ \text{Vector}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_4 \\ \text{Cactus}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_5 \\ \text{English}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_6 \\ \text{Ordinary}\end{matrix}\) |

| | \(x\colon\) | \(1~1~0~0\) | | | |

| | \(y\colon\) | \(1~0~1~0\) | | | |

| \(\begin{matrix} f_{0} \\[4pt] f_{1} \\[4pt] f_{2} \\[4pt] f_{3} \\[4pt] f_{4} \\[4pt] f_{5} \\[4pt] f_{6} \\[4pt] f_{7} \end{matrix}\) | \(\begin{matrix} f_{0000} \\[4pt] f_{0001} \\[4pt] f_{0010} \\[4pt] f_{0011} \\[4pt] f_{0100} \\[4pt] f_{0101} \\[4pt] f_{0110} \\[4pt] f_{0111} \end{matrix}\) | \(\begin{matrix} 0~0~0~0 \\[4pt] 0~0~0~1 \\[4pt] 0~0~1~0 \\[4pt] 0~0~1~1 \\[4pt] 0~1~0~0 \\[4pt] 0~1~0~1 \\[4pt] 0~1~1~0 \\[4pt] 0~1~1~1 \end{matrix}\) | \(\begin{matrix} \texttt{(} ~ \texttt{)} \\[4pt] \texttt{(} x \texttt{)(} y \texttt{)} \\[4pt] \texttt{(} x \texttt{)} ~ y ~ \\[4pt] \texttt{(} x \texttt{)} \\[4pt] ~ x ~ \texttt{(} y \texttt{)} \\[4pt] \texttt{(} y \texttt{)} \\[4pt] \texttt{(} x \texttt{,} ~ y \texttt{)} \\[4pt] \texttt{(} x ~ y \texttt{)} \end{matrix}\) | \(\begin{matrix} \text{false} \\[4pt] \text{neither}~ x ~\text{nor}~ y \\[4pt] y ~\text{without}~ x \\[4pt] \text{not}~ x \\[4pt] x ~\text{without}~ y \\[4pt] \text{not}~ y \\[4pt] x ~\text{not equal to}~ y \\[4pt] \text{not both}~ x ~\text{and}~ y \end{matrix}\) | \(\begin{matrix} 0 \\[4pt] \lnot x \land \lnot y \\[4pt] \lnot x \land y \\[4pt] \lnot x \\[4pt] x \land \lnot y \\[4pt] \lnot y \\[4pt] x \ne y \\[4pt] \lnot x \lor \lnot y \end{matrix}\) |

| \(\begin{matrix} f_{8} \\[4pt] f_{9} \\[4pt] f_{10} \\[4pt] f_{11} \\[4pt] f_{12} \\[4pt] f_{13} \\[4pt] f_{14} \\[4pt] f_{15} \end{matrix}\) | \(\begin{matrix} f_{1000} \\[4pt] f_{1001} \\[4pt] f_{1010} \\[4pt] f_{1011} \\[4pt] f_{1100} \\[4pt] f_{1101} \\[4pt] f_{1110} \\[4pt] f_{1111} \end{matrix}\) | \(\begin{matrix} 1~0~0~0 \\[4pt] 1~0~0~1 \\[4pt] 1~0~1~0 \\[4pt] 1~0~1~1 \\[4pt] 1~1~0~0 \\[4pt] 1~1~0~1 \\[4pt] 1~1~1~0 \\[4pt] 1~1~1~1 \end{matrix}\) | \(\begin{matrix} x ~ y \\[4pt] \texttt{((} x \texttt{,} ~ y \texttt{))} \\[4pt] y \\[4pt] \texttt{(} x ~ \texttt{(} y \texttt{))} \\[4pt] x \\[4pt] \texttt{((} x \texttt{)} ~ y \texttt{)} \\[4pt] \texttt{((} x \texttt{)(} y \texttt{))} \\[4pt] \texttt{((} ~ \texttt{))} \end{matrix}\) | \(\begin{matrix} x ~\text{and}~ y \\[4pt] x ~\text{equal to}~ y \\[4pt] y \\[4pt] \text{not}~ x ~\text{without}~ y \\[4pt] x \\[4pt] \text{not}~ y ~\text{without}~ x \\[4pt] x ~\text{or}~ y \\[4pt] \text{true} \end{matrix}\) | \(\begin{matrix} x \land y \\[4pt] x = y \\[4pt] y \\[4pt] x \Rightarrow y \\[4pt] x \\[4pt] x \Leftarrow y \\[4pt] x \lor y \\[4pt] 1 \end{matrix}\) |

| \(\begin{matrix}\mathcal{L}_1 \\ \text{Decimal}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_2 \\ \text{Binary}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_3 \\ \text{Vector}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_4 \\ \text{Cactus}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_5 \\ \text{English}\end{matrix}\) | \(\begin{matrix}\mathcal{L}_6 \\ \text{Ordinary}\end{matrix}\) |

| | \(x\colon\) | \(1~1~0~0\) | | | |

| | \(y\colon\) | \(1~0~1~0\) | | | |

| \(f_{0}\) | \(f_{0000}\) | \(0~0~0~0\) | \(\texttt{(} ~ \texttt{)}\) | \(\text{false}\) | \(0\) |

| \(\begin{matrix} f_{1} \\[4pt] f_{2} \\[4pt] f_{4} \\[4pt] f_{8} \end{matrix}\) | \(\begin{matrix} f_{0001} \\[4pt] f_{0010} \\[4pt] f_{0100} \\[4pt] f_{1000} \end{matrix}\) | \(\begin{matrix} 0~0~0~1 \\[4pt] 0~0~1~0 \\[4pt] 0~1~0~0 \\[4pt] 1~0~0~0 \end{matrix}\) | \(\begin{matrix} \texttt{(} x \texttt{)(} y \texttt{)} \\[4pt] \texttt{(} x \texttt{)} ~ y ~ \\[4pt] ~ x ~ \texttt{(} y \texttt{)} \\[4pt] ~ x ~~ y ~ \end{matrix}\) | \(\begin{matrix} \text{neither}~ x ~\text{nor}~ y \\[4pt] y ~\text{without}~ x \\[4pt] x ~\text{without}~ y \\[4pt] x ~\text{and}~ y \end{matrix}\) | \(\begin{matrix} \lnot x \land \lnot y \\[4pt] \lnot x \land y \\[4pt] x \land \lnot y \\[4pt] x \land y \end{matrix}\) |

| \(\begin{matrix} f_{3} \\[4pt] f_{12} \end{matrix}\) | \(\begin{matrix} f_{0011} \\[4pt] f_{1100} \end{matrix}\) | \(\begin{matrix} 0~0~1~1 \\[4pt] 1~1~0~0 \end{matrix}\) | \(\begin{matrix} \texttt{(} x \texttt{)} \\[4pt] x \end{matrix}\) | \(\begin{matrix} \text{not}~ x \\[4pt] x \end{matrix}\) | \(\begin{matrix} \lnot x \\[4pt] x \end{matrix}\) |

| \(\begin{matrix} f_{6} \\[4pt] f_{9} \end{matrix}\) | \(\begin{matrix} f_{0110} \\[4pt] f_{1001} \end{matrix}\) | \(\begin{matrix} 0~1~1~0 \\[4pt] 1~0~0~1 \end{matrix}\) | \(\begin{matrix} \texttt{(} x \texttt{,} y \texttt{)} \\[4pt] \texttt{((} x \texttt{,} y \texttt{))} \end{matrix}\) | \(\begin{matrix} x ~\text{not equal to}~ y \\[4pt] x ~\text{equal to}~ y \end{matrix}\) | \(\begin{matrix} x \ne y \\[4pt] x = y \end{matrix}\) |

| \(\begin{matrix} f_{5} \\[4pt] f_{10} \end{matrix}\) | \(\begin{matrix} f_{0101} \\[4pt] f_{1010} \end{matrix}\) | \(\begin{matrix} 0~1~0~1 \\[4pt] 1~0~1~0 \end{matrix}\) | \(\begin{matrix} \texttt{(} y \texttt{)} \\[4pt] y \end{matrix}\) | \(\begin{matrix} \text{not}~ y \\[4pt] y \end{matrix}\) | \(\begin{matrix} \lnot y \\[4pt] y \end{matrix}\) |

| \(\begin{matrix} f_{7} \\[4pt] f_{11} \\[4pt] f_{13} \\[4pt] f_{14} \end{matrix}\) | \(\begin{matrix} f_{0111} \\[4pt] f_{1011} \\[4pt] f_{1101} \\[4pt] f_{1110} \end{matrix}\) | \(\begin{matrix} 0~1~1~1 \\[4pt] 1~0~1~1 \\[4pt] 1~1~0~1 \\[4pt] 1~1~1~0 \end{matrix}\) | \(\begin{matrix} \texttt{(} ~ x ~~ y ~ \texttt{)} \\[4pt] \texttt{(} ~ x ~ \texttt{(} y \texttt{))} \\[4pt] \texttt{((} x \texttt{)} ~ y ~ \texttt{)} \\[4pt] \texttt{((} x \texttt{)(} y \texttt{))} \end{matrix}\) | \(\begin{matrix} \text{not both}~ x ~\text{and}~ y \\[4pt] \text{not}~ x ~\text{without}~ y \\[4pt] \text{not}~ y ~\text{without}~ x \\[4pt] x ~\text{or}~ y \end{matrix}\) | \(\begin{matrix} \lnot x \lor \lnot y \\[4pt] x \Rightarrow y \\[4pt] x \Leftarrow y \\[4pt] x \lor y \end{matrix}\) |

| \(f_{15}\) | \(f_{1111}\) | \(1~1~1~1\) | \(\texttt{((} ~ \texttt{))}\) | \(\text{true}\) | \(1\) |
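The indexing scheme used in these tables can be reproduced mechanically. The following is a minimal sketch, assuming the cell order given by the header rows, \(x = 1~1~0~0\) and \(y = 1~0~1~0.\) The helper names `vector` and `index` are illustrative, and the four sample forms are written with ordinary Python bit operators and not in cactus syntax.

```python
# Cells of [x, y] in the order used by the table header: x = 1 1 0 0, y = 1 0 1 0.
cells = [(1, 1), (1, 0), (0, 1), (0, 0)]

def vector(f):
    """Values of f on the cells, written as a string such as '0110'."""
    return "".join(str(int(f(x, y))) for x, y in cells)

def index(f):
    """Decimal name of f, read off from its binary value string."""
    return int(vector(f), 2)

# A few forms from the table, written with ordinary Python operators.
forms = {
    "x and y": lambda x, y: x & y,
    "x not equal to y": lambda x, y: x ^ y,
    "x or y": lambda x, y: x | y,
    "not x without y": lambda x, y: 1 - (x & (1 - y)),   # x => y
}
names = {label: index(f) for label, f in forms.items()}
```

The computed indices agree with the rows for \(f_8,\) \(f_6,\) \(f_{14},\) and \(f_{11}.\)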

A Differential Extension of Propositional Calculus

| Fire over water: |

| — I Ching, Hexagram 64, [Wil, 249] |

This much preparation is enough to begin introducing my subject, if I excuse myself from giving full arguments for my definitional choices until some later stage. I am trying to develop a differential theory of qualitative equations that parallels the application of differential geometry to dynamic systems. The idea of a tangent vector is key to this work and a major goal is to find the right logical analogues of tangent spaces, bundles, and functors. The strategy is taken of looking for the simplest versions of these constructions that can be discovered within the realm of propositional calculus, so long as they serve to fill out the general theme.

Differential Propositions : Qualitative Analogues of Differential Equations

In order to define the differential extension of a universe of discourse \([\mathcal{A}],\) the initial alphabet \(\mathcal{A}\) must be extended to include a collection of symbols for differential features, or basic changes that are capable of occurring in \([\mathcal{A}].\) Intuitively, these symbols may be construed as denoting primitive features of change, qualitative attributes of motion, or propositions about how things or points in the universe may be changing or moving with respect to the features that are noted in the initial alphabet.

Therefore, let us define the corresponding differential alphabet or tangent alphabet as \(\mathrm{d}\mathcal{A}\) \(=\) \(\{\mathrm{d}a_1, \ldots, \mathrm{d}a_n\},\) in principle just an arbitrary alphabet of symbols, disjoint from the initial alphabet \(\mathcal{A}\) \(=\) \(\{ a_1, \ldots, a_n \},\) that is intended to be interpreted in the way just indicated. It only remains to be understood that the precise interpretation of the symbols in \(\mathrm{d}\mathcal{A}\) is often conceived to be changeable from point to point of the underlying space \(A.\) Indeed, for all we know, the state space \(A\) might well be the state space of a language interpreter, one that is concerned, among other things, with the idiomatic meanings of the dialect generated by \(\mathcal{A}\) and \(\mathrm{d}\mathcal{A}.\)

The tangent space to \(A\) at one of its points \(x,\) sometimes written \(\mathrm{T}_x(A),\) takes the form \(\mathrm{d}A\) \(=\) \(\langle \mathrm{d}\mathcal{A} \rangle\) \(=\) \(\langle \mathrm{d}a_1, \ldots, \mathrm{d}a_n \rangle.\) Strictly speaking, the name cotangent space is probably more correct for this construction, but the fact that we take up spaces and their duals in pairs to form our universes of discourse allows our language to be pliable here.

Proceeding as we did with the base space \(A,\) the tangent space \(\mathrm{d}A\) at a point of \(A\) can be analyzed as a product of distinct and independent factors:

| \(\mathrm{d}A ~=~ \prod_{i=1}^n \mathrm{d}A_i ~=~ \mathrm{d}A_1 \times \ldots \times \mathrm{d}A_n.\) |

Here, \(\mathrm{d}A_i\) is a set of two differential propositions, \(\mathrm{d}A_i = \{ \texttt{(} \mathrm{d}a_i \texttt{)}, \mathrm{d}a_i \},\) where \(\texttt{(} \mathrm{d}a_i \texttt{)}\) is a proposition with the logical value of \(\text{not} ~ \mathrm{d}a_i.\) Each component \(\mathrm{d}A_i\) has the type \(\mathbb{B},\) operating under the ordered correspondence \(\{ \texttt{(} \mathrm{d}a_i \texttt{)}, \mathrm{d}a_i \} \cong \{ 0, 1 \}.\) However, clarity is often served by acknowledging this differential usage with a superficially distinct type \(\mathbb{D},\) whose intension may be indicated as follows:

| \(\mathbb{D} = \{ \texttt{(} \mathrm{d}a_i \texttt{)}, \mathrm{d}a_i \} = \{ \text{same}, \text{different} \} = \{ \text{stay}, \text{change} \} = \{ \text{stop}, \text{step} \}.\) |

Viewed within a coordinate representation, spaces of type \(\mathbb{B}^n\) and \(\mathbb{D}^n\) may appear to be identical sets of binary vectors, but taking a view at this level of abstraction would be like ignoring the qualitative units and the diverse dimensions that distinguish position and momentum, or the different roles of quantity and impulse.

An Interlude on the Path

| There would have been no beginnings: instead, speech would proceed from me, while I stood in its path – a slender gap – the point of its possible disappearance. |

| — Michel Foucault, The Discourse on Language, [Fou, 215] |

A sense of the relation between \(\mathbb{B}\) and \(\mathbb{D}\) may be obtained by considering the path classifier (or the equivalence class of curves) approach to tangent vectors. Consider a universe \([\mathcal{X}].\) Given the boolean value system, a path in the space \(X = \langle \mathcal{X} \rangle\) is a map \(q : \mathbb{B} \to X.\) In this context the set of paths \((\mathbb{B} \to X)\) is isomorphic to the cartesian square \(X^2 = X \times X,\) or the set of ordered pairs chosen from \(X.\)

We may analyze \(X^2 = \{ (u, v) : u, v \in X \}\) into two parts, specifically, the ordered pairs \((u, v)\) that lie on and off the diagonal:

| \(\begin{matrix}X^2 & = & \{ (u, v) : u = v \} & \cup & \{ (u, v) : u \ne v \}.\end{matrix}\) |

This partition may also be expressed in the following symbolic form:

| \(\begin{matrix}X^2 & \cong & \operatorname{diag} (X) & + & 2 \binom{X}{2}.\end{matrix}\) |

The separate terms of this formula are defined as follows:

| \(\begin{matrix}\operatorname{diag} (X) & = & \{ (x, x) : x \in X \}.\end{matrix}\) |

| \(\begin{matrix}\binom{X}{k} & = & X ~\text{choose}~ k & = & \{ k\text{-sets from}~ X \}.\end{matrix}\) |

Thus we have:

| \(\begin{matrix}\binom{X}{2} & = & \{ \{ u, v \} : u, v \in X, ~ u \ne v \}.\end{matrix}\) |
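The counting content of this partition can be checked by brute force. The following is a minimal sketch, assuming \(X \cong \mathbb{B}^2\) and representing points as tuples of 0 and 1; the helper names `universe` and `partition_square` are illustrative and not part of the notation above.

```python
from itertools import combinations, product

def universe(n):
    """All points of X = B^n, represented as tuples of 0/1."""
    return list(product((0, 1), repeat=n))

def partition_square(X):
    """Split X^2 into the ordered pairs on and off the diagonal."""
    diagonal = [(u, v) for u in X for v in X if u == v]
    off_diagonal = [(u, v) for u in X for v in X if u != v]
    return diagonal, off_diagonal

X = universe(2)
diagonal, off_diagonal = partition_square(X)
two_sets = list(combinations(X, 2))  # unordered pairs of distinct points

# Each 2-set {u, v} accounts for two ordered pairs, (u, v) and (v, u).
assert len(diagonal) == len(X)
assert len(off_diagonal) == 2 * len(two_sets)
assert len(diagonal) + len(off_diagonal) == len(X) ** 2
```

The factor of 2 in the symbolic form reflects the two orientations of each off-diagonal pair.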

We may now use the features in \(\mathrm{d}\mathcal{X} = \{ \mathrm{d}x_i \} = \{ \mathrm{d}x_1, \ldots, \mathrm{d}x_n \}\) to classify the paths of \((\mathbb{B} \to X)\) by way of the pairs in \(X^2.\) If \(X \cong \mathbb{B}^n,\) then a path \(q\) in \(X\) has the following form:

| \(\begin{matrix} q : (\mathbb{B} \to \mathbb{B}^n) & \cong & \mathbb{B}^n \times \mathbb{B}^n & \cong & \mathbb{B}^{2n} & \cong & (\mathbb{B}^2)^n. \end{matrix}\) |

Intuitively, we want to map this \((\mathbb{B}^2)^n\) onto \(\mathbb{D}^n\) by mapping each component \(\mathbb{B}^2\) onto a copy of \(\mathbb{D}.\) But in the present context \({}^{\backprime\backprime} \mathbb{D} {}^{\prime\prime}\) is just a name associated with, or an incidental quality attributed to, coefficient values in \(\mathbb{B}\) when they are attached to features in \(\mathrm{d}\mathcal{X}.\)

Taking these intentions into account, define \(\mathrm{d}x_i : X^2 \to \mathbb{B}\) in the following manner:

| \(\begin{array}{lcrcl} \mathrm{d}x_i(u, v) & = & \texttt{(} ~ x_i(u) & \texttt{,} & x_i(v) ~ \texttt{)} \\ & = & x_i(u) & + & x_i(v) \\ & = & x_i(v) & - & x_i(u). \end{array}\) |

In the above transcription, the operator bracket of the form \(\texttt{(} \ldots \texttt{,} \ldots \texttt{)}\) is a cactus lobe, in general signifying that just one of the arguments listed is false. In the case of two arguments this is the same thing as saying that the arguments are not equal. The plus sign signifies boolean addition, in the sense of addition in \(\mathrm{GF}(2),\) and thus means the same thing in this context as the minus sign, in the sense of adding the additive inverse.
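The agreement of the three right-hand sides can be verified over all boolean arguments. The sketch below assumes truth values are encoded as the integers 0 and 1; the function name `lobe` is an illustrative stand-in for the bracket form.

```python
def lobe(*args):
    """Cactus lobe ( e_1, ..., e_k ): true iff exactly one argument is false."""
    return int(sum(1 for e in args if not e) == 1)

# With two arguments, the lobe, the sum in GF(2), and the difference
# in GF(2) all coincide with the test for inequality.
for a in (0, 1):
    for b in (0, 1):
        assert lobe(a, b) == (a + b) % 2 == (b - a) % 2 == int(a != b)

# With three arguments the lobe is no longer a parity sum:
# exactly one of (1, 1, 0) is false, yet 1 + 1 + 0 is 0 in GF(2).
assert lobe(1, 1, 0) == 1
assert (1 + 1 + 0) % 2 == 0
```

The last two assertions show why the identification with boolean addition is stated only for the two-argument case.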

The above definition of \(\mathrm{d}x_i : X^2 \to \mathbb{B}\) is equivalent to defining \(\mathrm{d}x_i : (\mathbb{B} \to X) \to \mathbb{B}\) in the following way:

| \(\begin{array}{lcrcl} \mathrm{d}x_i (q) & = & \texttt{(} ~ x_i(q_0) & \texttt{,} & x_i(q_1) ~ \texttt{)} \\ & = & x_i(q_0) & + & x_i(q_1) \\ & = & x_i(q_1) & - & x_i(q_0). \end{array}\) |

In this definition \(q_b = q(b),\) for each \(b\) in \(\mathbb{B}.\) Thus, the proposition \(\mathrm{d}x_i\) is true of the path \(q = (u, v)\) exactly if the terms of \(q,\) the endpoints \(u\) and \(v,\) lie on different sides of the question \(x_i.\)

The language of features in \(\langle \mathrm{d}\mathcal{X} \rangle,\) indeed the whole calculus of propositions in \([\mathrm{d}\mathcal{X}],\) may now be used to classify paths and sets of paths. In other words, the paths can be taken as models of the propositions \(g : \mathrm{d}X \to \mathbb{B}.\) For example, the paths corresponding to \(\mathrm{diag}(X)\) fall under the description \(\texttt{(} \mathrm{d}x_1 \texttt{)} \cdots \texttt{(} \mathrm{d}x_n \texttt{)},\) which says that nothing changes against the backdrop of the coordinate frame \(\{ x_1, \ldots, x_n \}.\)
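As a concrete illustration of paths serving as models of differential propositions, the following sketch assumes \(X \cong \mathbb{B}^2,\) represents a path as an ordered pair of points, and uses 0-based indices for the coordinates. The names `x`, `dx`, and `classify` are chosen for this example only.

```python
from itertools import product

n = 2  # illustrative number of coordinates
X = list(product((0, 1), repeat=n))

def x(i, point):
    """Coordinate feature x_i of a point in B^n (0-based index i)."""
    return point[i]

def dx(i, path):
    """dx_i(q) = x_i(q_0) + x_i(q_1) in GF(2), for a path q = (u, v)."""
    u, v = path
    return x(i, u) ^ x(i, v)

def classify(path):
    """The differential feature vector (dx_1(q), ..., dx_n(q)) of a path."""
    return tuple(dx(i, path) for i in range(n))

paths = list(product(X, repeat=2))

# Models of the proposition (dx_1)...(dx_n): no coordinate changes.
stays = [q for q in paths if all(dx(i, q) == 0 for i in range(n))]
assert stays == [(u, u) for u in X]  # exactly the diagonal of X^2

# A path that crosses the question x_1 but not x_2.
assert classify(((0, 0), (1, 0))) == (1, 0)
```

Note that `classify` sends every path onto a point of \(\mathbb{D}^n\) without using any vector space structure on \(X\) beyond the coordinate maps themselves.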

Finally, a few words of explanation may be in order. If this concept of a path appears to be described in a roundabout fashion, it is because I am trying to avoid using any assumption of vector space properties for the space \(X\) that contains its range. In many ways the treatment is still unsatisfactory, but improvements will have to wait for the introduction of substitution operators acting on singular propositions.

The Extended Universe of Discourse

| At the moment of speaking, I would like to have perceived a nameless voice, long preceding me, leaving me merely to enmesh myself in it, taking up its cadence, and to lodge myself, when no one was looking, in its interstices as if it had paused an instant, in suspense, to beckon to me. |

| — Michel Foucault, The Discourse on Language, [Fou, 215] | ||

Next we define the extended alphabet or bundled alphabet \(\mathrm{E}\mathcal{A}\) as follows:

| \(\begin{array}{lclcl} \mathrm{E}\mathcal{A} & = & \mathcal{A} \cup \mathrm{d}\mathcal{A} & = & \{ a_1, \ldots, a_n, \mathrm{d}a_1, \ldots, \mathrm{d}a_n \}. \end{array}\) |

This supplies enough material to construct the differential extension \(\mathrm{E}A,\) or the tangent bundle over the initial space \(A,\) in the following fashion:

| \(\begin{array}{lcl} \mathrm{E}A & = & \langle \mathrm{E}\mathcal{A} \rangle \\[4pt] & = & \langle \mathcal{A} \cup \mathrm{d}\mathcal{A} \rangle \\[4pt] & = & \langle a_1, \ldots, a_n, \mathrm{d}a_1, \ldots, \mathrm{d}a_n \rangle, \end{array}\) |

and also:

| \(\begin{array}{lcl} \mathrm{E}A & = & A \times \mathrm{d}A \\[4pt] & = & A_1 \times \ldots \times A_n \times \mathrm{d}A_1 \times \ldots \times \mathrm{d}A_n. \end{array}\) |

This gives \(\mathrm{E}A\) the type \(\mathbb{B}^n \times \mathbb{D}^n.\)

Finally, the tangent universe \(\mathrm{E}A^\bullet = [\mathrm{E}\mathcal{A}]\) is constituted from the totality of points and maps, or interpretations and propositions, that are based on the extended set of features \(\mathrm{E}\mathcal{A},\) and this fact is summed up in the following notation:

| \(\begin{array}{lclcl} \mathrm{E}A^\bullet & = & [\mathrm{E}\mathcal{A}] & = & [a_1, \ldots, a_n, \mathrm{d}a_1, \ldots, \mathrm{d}a_n]. \end{array}\) |

This gives the tangent universe \(\mathrm{E}A^\bullet\) the type:

| \(\begin{array}{lcl} (\mathbb{B}^n \times \mathbb{D}^n\ +\!\to \mathbb{B}) & = & (\mathbb{B}^n \times \mathbb{D}^n, (\mathbb{B}^n \times \mathbb{D}^n \to \mathbb{B})). \end{array}\) |

A proposition in the tangent universe \([\mathrm{E}\mathcal{A}]\) is called a differential proposition and forms the analogue of a system of differential equations, constraints, or relations in ordinary calculus.
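To make the sizes of these spaces tangible, the sketch below enumerates \(\mathrm{E}A\) for \(n = 2\) and evaluates one differential proposition on it. The proposition \(a_1 \texttt{(} \mathrm{d}a_1 \texttt{)},\) read as "\(a_1\) holds and does not change", is a hypothetical example chosen for illustration, as is the function name `stays_true_1`.

```python
from itertools import product

n = 2  # number of basic features a_1, ..., a_n (illustrative choice)

A = list(product((0, 1), repeat=n))     # points of A, type B^n
dA = list(product((0, 1), repeat=n))    # points of dA, type D^n
EA = [(a, da) for a in A for da in dA]  # points of EA = A x dA

def stays_true_1(point):
    """Hypothetical differential proposition a_1 (da_1)."""
    a, da = point
    return int(a[0] == 1 and da[0] == 0)

# The models of the proposition are the points of EA where it is true.
models = [pt for pt in EA if stays_true_1(pt)]

assert len(EA) == 2 ** (2 * n)            # 2^(2n) points in EA
assert len(models) == 2 ** (2 * n - 2)    # two coordinates fixed, the rest free

# Every subset of EA is the model set of exactly one proposition EA -> B.
num_propositions = 2 ** len(EA)
```

For \(n = 2\) this gives 16 points in \(\mathrm{E}A\) and \(2^{16}\) differential propositions, which indicates how quickly the tangent universe grows with \(n.\)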

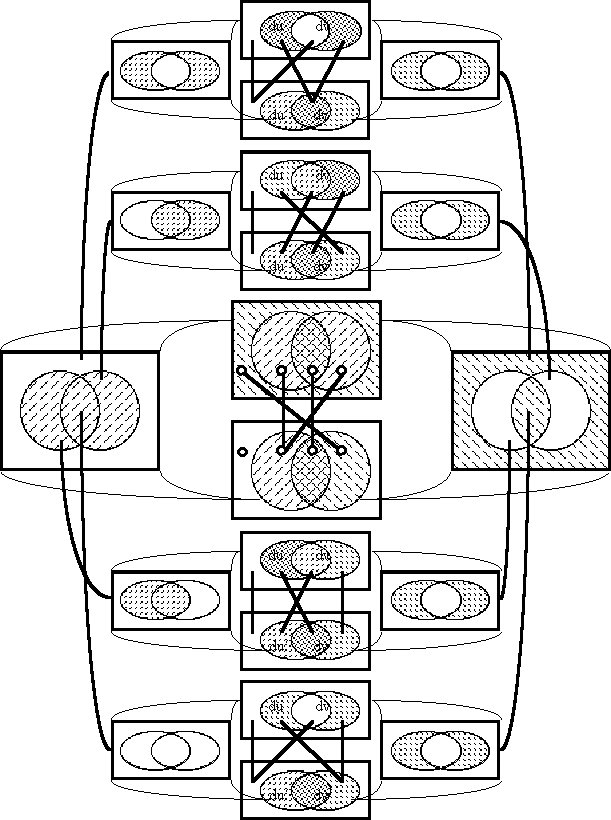

With these constructions, the differential extension \(\mathrm{E}A\) and the space of differential propositions \((\mathrm{E}A \to \mathbb{B}),\) we have arrived, in main outline, at one of the major subgoals of this study. Table 8 summarizes the concepts that have been introduced for working with differentially extended universes of discourse.

| \(\text{Symbol}\) | \(\text{Notation}\) | \(\text{Description}\) | \(\text{Type}\) |

| \(\mathrm{d}\mathfrak{A}\) | \(\{ {}^{\backprime\backprime} \mathrm{d}a_1 {}^{\prime\prime}, \ldots, {}^{\backprime\backprime} \mathrm{d}a_n {}^{\prime\prime} \}\) | \(\begin{matrix} \text{Alphabet of} \\[2pt] \text{differential symbols} \end{matrix}\) | \([n] = \mathbf{n}\) |

| \(\mathrm{d}\mathcal{A}\) | \(\{ \mathrm{d}a_1, \ldots, \mathrm{d}a_n \}\) | \(\begin{matrix} \text{Basis of} \\[2pt] \text{differential features} \end{matrix}\) | \([n] = \mathbf{n}\) |

| \(\mathrm{d}A_i\) | \(\{ \texttt{(} \mathrm{d}a_i \texttt{)}, \mathrm{d}a_i \}\) | \(\text{Differential dimension}~ i\) | \(\mathbb{D}\) |

| \(\mathrm{d}A\) | \(\begin{matrix} \langle \mathrm{d}\mathcal{A} \rangle \\[2pt] \langle \mathrm{d}a_1, \ldots, \mathrm{d}a_n \rangle \\[2pt] \{ (\mathrm{d}a_1, \ldots, \mathrm{d}a_n) \} \\[2pt] \mathrm{d}A_1 \times \ldots \times \mathrm{d}A_n \\[2pt] \textstyle \prod_i \mathrm{d}A_i \end{matrix}\) | \(\begin{matrix} \text{Tangent space at a point:} \\[2pt] \text{Set of changes, motions,} \\[2pt] \text{steps, tangent vectors} \\[2pt] \text{at a point} \end{matrix}\) | \(\mathbb{D}^n\) |

| \(\mathrm{d}A^*\) | \((\mathrm{hom} : \mathrm{d}A \to \mathbb{B})\) | \(\text{Linear functions on}~ \mathrm{d}A\) | \((\mathbb{D}^n)^* \cong \mathbb{D}^n\) |

| \(\mathrm{d}A^\uparrow\) | \((\mathrm{d}A \to \mathbb{B})\) | \(\text{Boolean functions on}~ \mathrm{d}A\) | \(\mathbb{D}^n \to \mathbb{B}\) |

| \(\mathrm{d}A^\bullet\) | \(\begin{matrix} [\mathrm{d}\mathcal{A}] \\[2pt] (\mathrm{d}A, \mathrm{d}A^\uparrow) \\[2pt] (\mathrm{d}A ~+\!\to \mathbb{B}) \\[2pt] (\mathrm{d}A, (\mathrm{d}A \to \mathbb{B})) \\[2pt] [\mathrm{d}a_1, \ldots, \mathrm{d}a_n] \end{matrix}\) | \(\begin{matrix} \text{Tangent universe at a point of}~ A^\bullet, \\[2pt] \text{based on the tangent features} \\[2pt] \{ \mathrm{d}a_1, \ldots, \mathrm{d}a_n \} \end{matrix}\) | \(\begin{matrix} (\mathbb{D}^n, (\mathbb{D}^n \to \mathbb{B})) \\[2pt] (\mathbb{D}^n ~+\!\to \mathbb{B}) \\[2pt] [\mathbb{D}^n] \end{matrix}\) |

The adjectives differential or tangent are systematically attached to every construct based on the differential alphabet \(\mathrm{d}\mathfrak{A},\) taken by itself. Strictly speaking, we probably ought to call \(\mathrm{d}\mathcal{A}\) the set of cotangent features derived from \(\mathcal{A},\) but the only time this distinction really seems to matter is when we need to distinguish the tangent vectors as maps of type \((\mathbb{B}^n \to \mathbb{B}) \to \mathbb{B}\) from cotangent vectors as elements of type \(\mathbb{D}^n.\) In like fashion, having defined \(\mathrm{E}\mathcal{A} = \mathcal{A} \cup \mathrm{d}\mathcal{A},\) we can systematically attach the adjective extended or the substantive bundle to all of the constructs associated with this full complement of \({2n}\) features.

It eventually becomes necessary to extend the initial alphabet even further, to allow for the discussion of higher order differential expressions. Table 9 provides a suggestion of how these further extensions can be carried out.

| \(\begin{array}{lllll} \mathrm{d}^0 \mathcal{A} & = & \{ a_1, \ldots, a_n \} & = & \mathcal{A} \\ \mathrm{d}^1 \mathcal{A} & = & \{ \mathrm{d}a_1, \ldots, \mathrm{d}a_n \} & = & \mathrm{d}\mathcal{A} \\ \mathrm{d}^k \mathcal{A} & = & \{ \mathrm{d}^k a_1, \ldots, \mathrm{d}^k a_n \} \\ \mathrm{d}^* \mathcal{A} & = & \{ \mathrm{d}^0 \mathcal{A}, \ldots, \mathrm{d}^k \mathcal{A}, \ldots \} \end{array}\) | \(\begin{array}{lll} \mathrm{E}^0 \mathcal{A} & = & \mathrm{d}^0 \mathcal{A} \\ \mathrm{E}^1 \mathcal{A} & = & \mathrm{d}^0 \mathcal{A} ~\cup~ \mathrm{d}^1 \mathcal{A} \\ \mathrm{E}^k \mathcal{A} & = & \mathrm{d}^0 \mathcal{A} ~\cup~ \ldots ~\cup~ \mathrm{d}^k \mathcal{A} \\ \mathrm{E}^\infty \mathcal{A} & = & \bigcup~ \mathrm{d}^* \mathcal{A} \end{array}\) |
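The finite stages of this scheme are easy to generate mechanically. The following sketch treats feature symbols as strings and marks a \(k\)-th order differential by \(k\) leading copies of the letter `d`. That naming convention is an assumption made for the example, and it presumes that no basic symbol already begins with `d`.

```python
def d(k, alphabet):
    """d^k A: mark each symbol with k differential prefixes (naming assumed)."""
    return ["d" * k + a for a in alphabet]

def E(k, alphabet):
    """E^k A: the union of d^0 A, ..., d^k A, listed in order."""
    return [s for j in range(k + 1) for s in d(j, alphabet)]

A = ["a1", "a2"]

assert d(0, A) == A                           # d^0 A = A
assert d(2, A) == ["dda1", "dda2"]            # stands for d^2 a_1, d^2 a_2
assert E(1, A) == ["a1", "a2", "da1", "da2"]  # E^1 A = A u dA
assert len(E(3, A)) == 4 * len(A)             # E^k A has (k + 1) n features
```

The infinite stages \(\mathrm{d}^* \mathcal{A}\) and \(\mathrm{E}^\infty \mathcal{A}\) are not represented here; in a program they would more naturally be generators than lists.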

Intentional Propositions

| Do you guess I have some intricate purpose? |

| — Walt Whitman, Leaves of Grass, [Whi, 45] |

In order to analyze the behavior of a system at successive moments in time, while staying within the limitations of propositional logic, it is necessary to create independent alphabets of logical features for each moment of time that we contemplate using in our discussion. These moments have reference to typical instances and relative intervals, not actual or absolute times. For example, to discuss velocities (first order rates of change) we need to consider points of time in pairs. There are a number of natural ways of doing this. Given an initial alphabet, we could use its symbols as a lexical basis to generate successive alphabets of compound symbols, say, with temporal markers appended as suffixes.

As a standard way of dealing with these situations, the following scheme of notation suggests a way of extending any alphabet of logical features through as many temporal moments as a particular order of analysis may demand. The lexical operators \(\mathrm{p}^k\) and \(\mathrm{Q}^k\) are convenient in many contexts where the accumulation of prime symbols and union symbols would otherwise be cumbersome.

| \(\begin{array}{lllll} \mathrm{p}^0 \mathcal{A} & = & \{ a_1, \ldots, a_n \} & = & \mathcal{A} \\ \mathrm{p}^1 \mathcal{A} & = & \{ a_1^\prime, \ldots, a_n^\prime \} & = & \mathcal{A}^\prime \\ \mathrm{p}^2 \mathcal{A} & = & \{ a_1^{\prime\prime}, \ldots, a_n^{\prime\prime} \} & = & \mathcal{A}^{\prime\prime} \\ \cdots & & \cdots \\ \mathrm{p}^k \mathcal{A} & = & \{ \mathrm{p}^k a_1, \ldots, \mathrm{p}^k a_n \} \end{array}\) | \(\begin{array}{lll} \mathrm{Q}^0 \mathcal{A} & = & \mathcal{A} \\ \mathrm{Q}^1 \mathcal{A} & = & \mathcal{A} \cup \mathcal{A}' \\ \mathrm{Q}^2 \mathcal{A} & = & \mathcal{A} \cup \mathcal{A}' \cup \mathcal{A}'' \\ \cdots & & \cdots \\ \mathrm{Q}^k \mathcal{A} & = & \mathcal{A} \cup \mathcal{A}' \cup \ldots \cup \mathrm{p}^k \mathcal{A} \end{array}\) |
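One way to see what the independent alphabets provide is to read a short trajectory of states as a single interpretation of \(\mathrm{Q}^k \mathcal{A}.\) The sketch below assumes that symbols are strings, that primes are written as trailing apostrophes, and that a trajectory is a list of \(k + 1\) boolean tuples. The function `interpret` is a hypothetical helper and not part of the notation above.

```python
def p(k, alphabet):
    """p^k A: append k prime marks to each symbol."""
    return [a + "'" * k for a in alphabet]

def Q(k, alphabet):
    """Q^k A: the union of p^0 A, ..., p^k A, listed in order."""
    return [s for j in range(k + 1) for s in p(j, alphabet)]

def interpret(trajectory, alphabet):
    """Assign the j-th state of a trajectory to the features of p^j A."""
    return {
        symbol: bit
        for j, state in enumerate(trajectory)
        for symbol, bit in zip(p(j, alphabet), state)
    }

A = ["a1", "a2"]
assert Q(1, A) == ["a1", "a2", "a1'", "a2'"]

# Two successive moments: a1 stays true while a2 switches on.
values = interpret([(1, 0), (1, 1)], A)
assert values == {"a1": 1, "a2": 0, "a1'": 1, "a2'": 1}
```

Because each moment has its own symbols, a statement about two moments at once, such as "\(a_2\) is false now and true next", becomes an ordinary proposition over \(\mathrm{Q}^1 \mathcal{A}.\)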

The resulting augmentations of our logical basis determine a series of discursive universes that may be called the intentional extension of propositional calculus. This extension follows a pattern analogous to the differential extension, which was developed in terms of the operators \(\mathrm{d}^k\) and \(\mathrm{E}^k,\) and there is a natural relation between these two extensions that bears further examination. In contexts displaying this pattern, where a sequence of domains stretches from an anchoring domain \(X\) through an indefinite number of higher reaches, a particular collection of domains based on \(X\) will be referred to as a realm of \(X,\) and when the succession exhibits a temporal aspect, as a reign of \(X.\)

For the purposes of this discussion, an intentional proposition is defined as a proposition in the universe of discourse \(\mathrm{Q}X^\bullet = [\mathrm{Q}\mathcal{X}],\) in other words, a map \(q : \mathrm{Q}X \to \mathbb{B}.\) The sense of this definition may be seen if we consider the following facts. First, the equivalence \(\mathrm{Q}X = X \times X'\) motivates the following chain of isomorphisms between spaces:

|