Peirce's 1870 Logic Of Relatives

Author: Jon Awbrey

Peirce's text employs lower case letters for logical terms of general reference and upper case letters for logical terms of individual reference. General terms fall into types (absolute terms, dyadic relative terms, and higher adic relative terms), and Peirce employs different typefaces to distinguish these. The following tables indicate the typefaces that are used in the text below for Peirce's examples of general terms.

|

\(\begin{array}{ll} \mathrm{a}. & \text{animal} \\ \mathrm{b}. & \text{black} \\ \mathrm{f}. & \text{Frenchman} \\ \mathrm{h}. & \text{horse} \\ \mathrm{m}. & \text{man} \\ \mathrm{p}. & \text{President of the United States Senate} \\ \mathrm{r}. & \text{rich person} \\ \mathrm{u}. & \text{violinist} \\ \mathrm{v}. & \text{Vice-President of the United States} \\ \mathrm{w}. & \text{woman} \end{array}\) |

|

\(\begin{array}{ll} \mathit{a}. & \text{enemy} \\ \mathit{b}. & \text{benefactor} \\ \mathit{c}. & \text{conqueror} \\ \mathit{e}. & \text{emperor} \\ \mathit{h}. & \text{husband} \\ \mathit{l}. & \text{lover} \\ \mathit{m}. & \text{mother} \\ \mathit{n}. & \text{not} \\ \mathit{o}. & \text{owner} \\ \mathit{s}. & \text{servant} \\ \mathit{w}. & \text{wife} \end{array}\) |

|

\(\begin{array}{ll} \mathfrak{b}. & \text{betrayer to ------ of ------} \\ \mathfrak{g}. & \text{giver to ------ of ------} \\ \mathfrak{t}. & \text{transferrer from ------ to ------} \\ \mathfrak{w}. & \text{winner over of ------ to ------ from ------} \end{array}\) |

Individual terms are taken to denote individual entities falling under a general term. Peirce uses upper case Roman letters for individual terms, for example, the individual horses \(\mathrm{H}, \mathrm{H}^{\prime}, \mathrm{H}^{\prime\prime}\) falling under the general term \(\mathrm{h}\!\) for horse.

The path to understanding Peirce's system and its wider implications for logic can be smoothed by paraphrasing his notations in a variety of contemporary mathematical formalisms, while preserving the semantics as much as possible. Remaining faithful to Peirce's orthography while adding parallel sets of stylistic conventions will, however, demand close attention to typography-in-context. Current style sheets for mathematical texts specify italics for mathematical variables, with upper case letters for sets and lower case letters for individuals. So we need to keep an eye out for the difference between the individual \(\mathrm{X}\!\) of the genus \(\mathrm{x}\!\) and the element \(x\!\) of the set \(X\!\) as we pass between the two styles of text.

Selection 1

Use of the Letters

|

The letters of the alphabet will denote logical signs. Now logical terms are of three grand classes. The first embraces those whose logical form involves only the conception of quality, and which therefore represent a thing simply as “a ——”. These discriminate objects in the most rudimentary way, which does not involve any consciousness of discrimination. They regard an object as it is in itself as such (quale); for example, as horse, tree, or man. These are absolute terms. The second class embraces terms whose logical form involves the conception of relation, and which require the addition of another term to complete the denotation. These discriminate objects with a distinct consciousness of discrimination. They regard an object as over against another, that is as relative; as father of, lover of, or servant of. These are simple relative terms. The third class embraces terms whose logical form involves the conception of bringing things into relation, and which require the addition of more than one term to complete the denotation. They discriminate not only with consciousness of discrimination, but with consciousness of its origin. They regard an object as medium or third between two others, that is as conjugative; as giver of —— to ——, or buyer of —— for —— from ——. These may be termed conjugative terms. The conjugative term involves the conception of third, the relative that of second or other, the absolute term simply considers an object. No fourth class of terms exists involving the conception of fourth, because when that of third is introduced, since it involves the conception of bringing objects into relation, all higher numbers are given at once, inasmuch as the conception of bringing objects into relation is independent of the number of members of the relationship. 
Whether this reason for the fact that there is no fourth class of terms fundamentally different from the third is satisfactory or not, the fact itself is made perfectly evident by the study of the logic of relatives. (Peirce, CP 3.63). |

I am going to experiment with an interlacing commentary on Peirce's 1870 “Logic of Relatives” paper, revisiting some critical transitions from several different angles and calling attention to a variety of puzzles, problems, and potentials that are not so often remarked or tapped.

What strikes me about the initial installment this time around is its use of a certain pattern of argument that I recognize as invoking a closure principle, a figure of reasoning that Peirce uses in three other places: his discussion of continuous predicates, his definition of sign relations, and the pragmatic maxim itself.

One might also call attention to the following two statements:

|

Now logical terms are of three grand classes. |

|

No fourth class of terms exists involving the conception of fourth, because when that of third is introduced, since it involves the conception of bringing objects into relation, all higher numbers are given at once, inasmuch as the conception of bringing objects into relation is independent of the number of members of the relationship. |
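The claim that no fourth class is needed can be illustrated, though not proved, by a standard construction: any 4-adic relation can be rebuilt by joining two 3-adic relations on a shared place that acts as a "medium" between them. Here is a minimal sketch in Python, assuming relations are finite sets of tuples. The names `split_4adic` and `join_on_bond` and the toy data are hypothetical. The sketch shows only this constructive half of the argument, not the irreducibility of 3-adic relations to 2-adic ones.

```python
def split_4adic(rel):
    """Split a 4-adic relation R(a, b, c, d) into two 3-adic relations
    S(a, b, t) and T(t, c, d) that share a bond place t.
    The bond is the 4-tuple itself, so each fact gets its own 'medium'."""
    s = {(t[0], t[1], t) for t in rel}
    t_rel = {(t, t[2], t[3]) for t in rel}
    return s, t_rel

def join_on_bond(s, t_rel):
    """Rebuild R(a, b, c, d) as: there is some t with S(a, b, t) and T(t, c, d)."""
    return {(a, b, c, d)
            for (a, b, t1) in s
            for (t2, c, d) in t_rel
            if t1 == t2}

# "buyer of --- for --- from ---" as a toy 4-adic relation (hypothetical data):
# (buyer, thing bought, beneficiary, seller).
buys = {("Ann", "book", "Bob", "Cal"),
        ("Dee", "pen", "Eve", "Cal")}

s, t_rel = split_4adic(buys)
assert join_on_bond(s, t_rel) == buys   # nothing is lost in the triadic split
```

Applying the same split repeatedly handles any higher arity. This is one way to read Peirce's remark that "all higher numbers are given at once."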

Selection 2

Numbers Corresponding to Letters

|

I propose to use the term “universe” to denote that class of individuals about which alone the whole discourse is understood to run. The universe, therefore, in this sense, as in Mr. De Morgan's, is different on different occasions. In this sense, moreover, discourse may run upon something which is not a subjective part of the universe; for instance, upon the qualities or collections of the individuals it contains. I propose to assign to all logical terms, numbers; to an absolute term, the number of individuals it denotes; to a relative term, the average number of things so related to one individual. Thus in a universe of perfect men (men), the number of “tooth of” would be 32. The number of a relative with two correlates would be the average number of things so related to a pair of individuals; and so on for relatives of higher numbers of correlates. I propose to denote the number of a logical term by enclosing the term in square brackets, thus \([t].\!\) (Peirce, CP 3.65). |
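Peirce's number of a term can be sketched concretely for finite data. The sketch below assumes that absolute terms are Python sets and that dyadic relatives are sets of (relate, correlate) pairs. It also reads "the average number of things so related to one individual" as an average over a chosen set of correlates, which is an interpretive assumption. The function names and data are hypothetical.

```python
from fractions import Fraction

def number_absolute(term):
    """[t] for an absolute term: the number of individuals it denotes."""
    return len(term)

def number_relative(pairs, correlates):
    """[r] for a dyadic relative given as (relate, correlate) pairs:
    the average number of relates per individual correlate.
    Assumption: the average is taken over the given set of correlates."""
    if not correlates:
        raise ValueError("need at least one correlate to average over")
    total = sum(1 for (_, y) in pairs if y in correlates)
    return Fraction(total, len(correlates))

# Peirce's example: in a universe of perfect men, "tooth of" has number 32.
men = {"M1", "M2", "M3"}
tooth_of = {(f"tooth{i}-of-{m}", m) for m in men for i in range(32)}

print(number_absolute(men))            # 3
print(number_relative(tooth_of, men))  # 32
```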

Peirce's remarks at CP 3.65 are so replete with remarkable ideas, some of them so taken for granted in mathematical discourse that they usually escape explicit mention, and others so suggestive of things to come in a future remote from his time of writing, and yet so smoothly introduced in passing that it's all too easy to overlook their consequential significance, that I can do no better here than to highlight these ideas in other words, whose main advantage is to be a little more jarring to the mind's sensibilities.

- This mapping of letters to numbers, or logical terms to mathematical quantities, is the very core of what "quantification theory" is all about, and definitely more to the point than the mere "innovation" of using distinctive symbols for the so-called "quantifiers". We will speak of this more later on.

- The mapping of logical terms to numerical measures, to express it in current language, would probably be recognizable as some kind of "morphism" or "functor" from a logical domain to a quantitative co-domain.

- Notice that Peirce follows the mathematician's usual practice, then and now, of making the status of being an "individual" or a "universal" relative to a discourse in progress. I have come to appreciate more and more of late how radically different this "patchwork" or "piecewise" approach to things is from the way of some philosophers who seem to be content with nothing less than many-worlds domination, which means that they are never content and rarely get started toward the solution of any real problem. Just my observation; I hope you understand.

- It is worth noting that Peirce takes the "plural denotation" of terms for granted, or what's the number of a term for, if it could not vary apart from being one or nil?

- I also observe that Peirce takes the individual objects of a particular universe of discourse in a "generative" way, not a "totalizing" way, and thus they afford us the basis for talking freely about collections, constructions, properties, qualities, subsets, and "higher types", as the phrase is mint.

Selection 3

The Signs of Inclusion, Equality, Etc.

|

I shall follow Boole in taking the sign of equality to signify identity. Thus, if \(\mathrm{v}\!\) denotes the Vice-President of the United States, and \(\mathrm{p}~\!\) the President of the Senate of the United States, |

| \(\mathrm{v} = \mathrm{p}\!\) |

|

means that every Vice-President of the United States is President of the Senate, and every President of the United States Senate is Vice-President. The sign “less than” is to be so taken that |

| \(\mathrm{f} < \mathrm{m}~\!\) |

|

means that every Frenchman is a man, but there are men besides Frenchmen. Drobisch has used this sign in the same sense. It will follow from these significations of \(=\!\) and \(<\!\) that the sign \(-\!\!\!<\!\) (or \(\leqq\), “as small as”) will mean “is”. Thus, |

| \(\mathrm{f} ~-\!\!\!< \mathrm{m}\) |

|

means “every Frenchman is a man”, without saying whether there are any other men or not. So, |

| \(\mathit{m} ~-\!\!\!< \mathit{l}\) |

|

will mean that every mother of anything is a lover of the same thing; although this interpretation in some degree anticipates a convention to be made further on. These significations of \(=\!\) and \(<\!\) plainly conform to the indispensable conditions. Upon the transitive character of these relations the syllogism depends, for by virtue of it, from |

| \(\mathrm{f} ~-\!\!\!< \mathrm{m} \quad \text{and} \quad \mathrm{m} ~-\!\!\!< \mathrm{a} \quad \text{we can infer that} \quad \mathrm{f} ~-\!\!\!< \mathrm{a};\) |

|

that is, from every Frenchman being a man and every man being an animal, that every Frenchman is an animal. But not only do the significations of \(=\!\) and \(<\!\) here adopted fulfill all absolute requirements, but they have the supererogatory virtue of being very nearly the same as the common significations. Equality is, in fact, nothing but the identity of two numbers; numbers that are equal are those which are predicable of the same collections, just as terms that are identical are those which are predicable of the same classes. So, to write \(5 < 7\!\) is to say that \(5\!\) is part of \(7\!\), just as to write \(\mathrm{f} < \mathrm{m}~\!\) is to say that Frenchmen are part of men. Indeed, if \(\mathrm{f} < \mathrm{m}~\!\), then the number of Frenchmen is less than the number of men, and if \(\mathrm{v} = \mathrm{p}\!\), then the number of Vice-Presidents is equal to the number of Presidents of the Senate; so that the numbers may always be substituted for the terms themselves, in case no signs of operation occur in the equations or inequalities. (Peirce, CP 3.66). |
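Two conditions that Peirce relies on here can be checked on toy data: the transitivity of \(-\!\!\!<\), and the substitution of numbers for terms when no operations occur. This is a minimal sketch that assumes absolute terms are finite Python sets, so that \(-\!\!\!<\) becomes the subset test and \(<\) the proper-subset test. All names and individuals are hypothetical.

```python
# Hypothetical toy data: absolute terms as finite sets of individuals.
frenchmen = {"Pierre", "Jean"}
men = frenchmen | {"John", "Hans"}
animals = men | {"Dobbin"}
vice_president = {"V"}
president_of_senate = {"V"}

def is_(x, y):
    """Peirce's -< ("as small as", read "is"): every x is a y."""
    return x <= y

def less_than(x, y):
    """Peirce's <: every x is a y, and there are y's besides the x's."""
    return x < y

# The syllogism rests on transitivity: f -< m and m -< a yield f -< a.
assert is_(frenchmen, men) and is_(men, animals)
assert is_(frenchmen, animals)

# With no operations in play, numbers may stand in for terms.
assert less_than(frenchmen, men) and len(frenchmen) < len(men)
assert vice_president == president_of_senate
assert len(vice_president) == len(president_of_senate)

# The map to numbers preserves order but does not reflect it:
# fewer horses than men does not make every horse a man.
horses = {"Dobbin"}
assert len(horses) < len(men) and not is_(horses, men)
```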

The quantifier mapping from terms to their numbers that Peirce signifies by means of the square bracket notation \([t]\!\) has one of its principal uses in providing a basis for the computation of frequencies, probabilities, and all of the other statistical measures that can be constructed from these, and thus in affording what may be called a principle of correspondence between probability theory and its limiting case in the forms of logic.
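Here is a small illustration of that correspondence. It assumes a finite universe and reads frequencies as ratios of numbers of terms. Under that reading, the whole universe gets frequency 1 and the empty term gets 0. A universal proposition such as "every Frenchman is a man" then appears as the limiting case of a conditional frequency equal to 1. The names and data are hypothetical.

```python
from fractions import Fraction

def frequency(term, universe):
    """[term] / [universe]: the share of the universe that a term denotes."""
    return Fraction(len(term & universe), len(universe))

def conditional_frequency(y, x):
    """[x that is y] / [x]: how often an x is also a y (x must be non-empty)."""
    return Fraction(len(x & y), len(x))

# Hypothetical toy universe.
universe = {"Pierre", "Jean", "John", "Hans", "Dobbin"}
men = {"Pierre", "Jean", "John", "Hans"}
frenchmen = {"Pierre", "Jean"}

print(frequency(men, universe))        # 4/5
print(frequency(universe, universe))   # 1
print(frequency(set(), universe))      # 0
# "Every Frenchman is a man" as the limiting case of a conditional frequency of 1.
print(conditional_frequency(men, frenchmen))  # 1
```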

This brings us once again to the relativity of contingency and necessity, as one way of approaching necessity is through the avenue of probability, describing necessity as a probability of 1, but the whole apparatus of probability theory only figures in if it is cast against the backdrop of probability space axioms, the reference class of distributions, and the sample space that we cannot help but abduce upon the scene of observations. Aye, there's the snake eyes. And with them we can see that there is always an irreducible quantum of facticity to all our necessities. More plainly spoken, it takes a fairly complex conceptual infrastructure just to begin speaking of probabilities, and this setting can only be set up by means of abductive, fallible, hypothetical, and inherently risky mental acts.

Pragmatic thinking is the logic of abduction, which is just another way of saying that it addresses the question: “What may be hoped?” We have to face the possibility that it may be just as impossible to speak of “absolute identity” with any hope of making practical philosophical sense as it is to speak of “absolute simultaneity” with any hope of making operational physical sense.

Selection 4

The Signs for Addition

|

The sign of addition is taken by Boole so that |

| \(x + y\!\) |

|

denotes everything denoted by \(x\!\), and, besides, everything denoted by \(y\!\). Thus |

| \(\mathrm{m} + \mathrm{w}~\!\) |

|

denotes all men, and, besides, all women. This signification for this sign is needed for connecting the notation of logic with that of the theory of probabilities. But if there is anything which is denoted by both terms of the sum, the latter no longer stands for any logical term on account of its implying that the objects denoted by one term are to be taken besides the objects denoted by the other. For example, |

| \(\mathrm{f} + \mathrm{u}\!\) |

|

means all Frenchmen besides all violinists, and, therefore, considered as a logical term, implies that all French violinists are besides themselves. For this reason alone, in a paper which is published in the Proceedings of the Academy for March 17, 1867, I preferred to take as the regular addition of logic a non-invertible process, such that |

| \(\mathrm{m} ~+\!\!,~ \mathrm{b}\) |

|

stands for all men and black things, without any implication that the black things are to be taken besides the men; and the study of the logic of relatives has supplied me with other weighty reasons for the same determination. Since the publication of that paper, I have found that Mr. W. Stanley Jevons, in a tract called Pure Logic, or the Logic of Quality [1864], had anticipated me in substituting the same operation for Boole's addition, although he rejects Boole's operation entirely and writes the new one with a \(+\!\) sign while withholding from it the name of addition. It is plain that both the regular non-invertible addition and the invertible addition satisfy the absolute conditions. But the notation has other recommendations. The conception of taking together involved in these processes is strongly analogous to that of summation, the sum of 2 and 5, for example, being the number of a collection which consists of a collection of two and a collection of five. Any logical equation or inequality in which no operation but addition is involved may be converted into a numerical equation or inequality by substituting the numbers of the several terms for the terms themselves — provided all the terms summed are mutually exclusive. Addition being taken in this sense, nothing is to be denoted by zero, for then |

| \(x ~+\!\!,~ 0 ~=~ x\) |

|

whatever is denoted by \(x\!\); and this is the definition of zero. This interpretation is given by Boole, and is very neat, on account of the resemblance between the ordinary conception of zero and that of nothing, and because we shall thus have |

| \([0] ~=~ 0.\) |

|

(Peirce, CP 3.67). |
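The contrast between Boole's invertible addition and Peirce's non-invertible \(+\!\!,\) can be made concrete. The sketch below models Boole's "besides" as a multiset sum using Python's `collections.Counter`, and models Peirce's \(+\!\!,\) as set union. That modeling choice is an assumption, and so are the toy data.

```python
from collections import Counter

# Hypothetical toy data; Luc is a French violinist.
frenchmen = {"Pierre", "Jean", "Luc"}
violinists = {"Luc", "Fritz"}

# Boole's invertible "+": all x and, besides, all y. Modeled here as a
# multiset sum, so an individual denoted by both terms is counted twice.
boole_sum = Counter(frenchmen) + Counter(violinists)

# Peirce's non-invertible "+,": all Frenchmen and violinists, once each.
peirce_sum = frenchmen | violinists

print(boole_sum["Luc"])   # 2: the French violinist is "besides himself"
print(len(peirce_sum))    # 4

# Boole's sum can be undone by subtraction; the logical sum cannot.
assert boole_sum - Counter(violinists) == Counter(frenchmen)
assert peirce_sum - violinists != frenchmen   # Luc is lost

# [x +, y] = [x] + [y] only when the terms are mutually exclusive.
assert len(peirce_sum) != len(frenchmen) + len(violinists)
women = {"Marie", "Anna"}
assert len(frenchmen | women) == len(frenchmen) + len(women)

# Zero denotes nothing: x +, 0 = x, and [0] = 0.
zero = set()
assert (frenchmen | zero) == frenchmen and len(zero) == 0
```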

A wealth of issues arises here that I hope to take up in depth at a later point, but for the moment I shall be able to mention only the barest sample of them in passing.

The two papers that precede this one in CP 3 are Peirce's papers of March and September 1867 in the Proceedings of the American Academy of Arts and Sciences, titled “On an Improvement in Boole's Calculus of Logic” and “Upon the Logic of Mathematics”, respectively. Among other things, these two papers provide us with further clues about the motivating considerations that brought Peirce to introduce the “number of a term” function, signified here by square brackets. I have already quoted from the “Logic of Mathematics” paper in a related connection.

In setting up a correspondence between “letters” and “numbers”, Peirce constructs a structure-preserving map from a logical domain to a numerical domain. That he does this deliberately is evidenced by the care that he takes with the conditions under which the chosen aspects of structure are preserved, along with his recognition of the critical fact that zeroes are preserved by the mapping.

Incidentally, Peirce appears to have an inkling of the problems that would later be caused by using the plus sign for inclusive disjunction, but his advice was overridden by the dialects of applied logic that developed in various communities, retarding the exchange of information among engineering, mathematical, and philosophical specialties all throughout the subsequent century.

Selection 5

The Signs for Multiplication

|

I shall adopt for the conception of multiplication the application of a relation, in such a way that, for example, \(\mathit{l}\mathrm{w}~\!\) shall denote whatever is lover of a woman. This notation is the same as that used by Mr. De Morgan, although he appears not to have had multiplication in his mind. \(\mathit{s}(\mathrm{m} ~+\!\!,~ \mathrm{w})\) will, then, denote whatever is servant of anything of the class composed of men and women taken together. So that: |

| \(\mathit{s}(\mathrm{m} ~+\!\!,~ \mathrm{w}) ~=~ \mathit{s}\mathrm{m} ~+\!\!,~ \mathit{s}\mathrm{w}.\) |

|

\((\mathit{l} ~+\!\!,~ \mathit{s})\mathrm{w}\) will denote whatever is lover or servant to a woman, and: |

| \((\mathit{l} ~+\!\!,~ \mathit{s})\mathrm{w} ~=~ \mathit{l}\mathrm{w} ~+\!\!,~ \mathit{s}\mathrm{w}.\) |

|

\((\mathit{s}\mathit{l})\mathrm{w}\!\) will denote whatever stands to a woman in the relation of servant of a lover, and: |

| \((\mathit{s}\mathit{l})\mathrm{w} ~=~ \mathit{s}(\mathit{l}\mathrm{w}).\) |

|

Thus all the absolute conditions of multiplication are satisfied. The term “identical with ——” is a unity for this multiplication. That is to say, if we denote “identical with ——” by \(\mathit{1}\!\) we have: |

| \(x \mathit{1} ~=~ x ~ ,\) |

|

whatever relative term \(x\!\) may be. For what is a lover of something identical with anything, is the same as a lover of that thing. (Peirce, CP 3.68). |
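The laws Peirce lists can be checked mechanically once relatives are modeled as sets of ordered pairs. In this minimal sketch, `apply` plays the role of multiplying a relative by an absolute term, `compose` multiplies two relatives, and \(+\!\!,\) on either kind of term is set union. These helpers and the data are hypothetical.

```python
def apply(rel, term):
    """Relative multiplication r t: whatever is r of some t.
    rel is a set of (relate, correlate) pairs; term is a set of individuals."""
    return {x for (x, y) in rel if y in term}

def compose(r, s):
    """Relative product r s: x is r of something that is s of z."""
    return {(x, z) for (x, y1) in r for (y2, z) in s if y1 == y2}

def identity(universe):
    """The relative "identical with", playing the role of Peirce's 1."""
    return {(x, x) for x in universe}

# Hypothetical toy data.
men = {"Al", "Bo", "Ed"}
women = {"Cy", "Di"}
universe = men | women
lover = {("Al", "Cy"), ("Bo", "Di"), ("Ed", "Al")}
servant = {("Ed", "Al"), ("Cy", "Di"), ("Ed", "Cy")}

# s(m +, w) = sm +, sw
assert apply(servant, men | women) == apply(servant, men) | apply(servant, women)
# (l +, s)w = lw +, sw
assert apply(lover | servant, women) == apply(lover, women) | apply(servant, women)
# (sl)w = s(lw)
assert apply(compose(servant, lover), women) == apply(servant, apply(lover, women))
# x1 = x
assert compose(lover, identity(universe)) == lover

print(apply(compose(servant, lover), women))  # {'Ed'}
```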

Peirce in 1870 is five years down the road from the Peirce of 1865–1866 who lectured extensively on the role of sign relations in the logic of scientific inquiry, articulating their involvement in the three types of inference, and inventing the concept of “information” to explain what it is that signs convey in the process. By this time, then, the semiotic or sign relational approach to logic is so implicit in his way of working that he does not always take the trouble to point out its distinctive features at each and every turn. So let's take a moment to draw out a few of these characters.

Sign relations, like any brand of non-trivial 3-adic relations, can become overwhelming to think about once the cardinality of the object, sign, and interpretant domains or the complexity of the relation itself ascends beyond the simplest examples. Furthermore, most of the strategies that we would normally use to control the complexity, like neglecting one of the domains, in effect, projecting the 3-adic sign relation onto one of its 2-adic faces, or focusing on a single ordered triple of the form \((o, s, i)\!\) at a time, can result in our receiving a distorted impression of the sign relation's true nature and structure.
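A standard example shows how projection can mislead. The two 3-adic relations below are defined on \(\{0, 1\}\) and are not drawn from Peirce's text. They differ as sets of triples, yet each of their three 2-adic faces is the full set of pairs. Reading the three places as object, sign, and interpretant is only an analogy here, and the helper `face` is hypothetical.

```python
from itertools import product

def face(rel, i, j):
    """Project a 3-adic relation onto its (i, j) 2-adic face."""
    return {(t[i], t[j]) for t in rel}

# Two different 3-adic relations on {0, 1}: even- and odd-parity triples.
even = {t for t in product((0, 1), repeat=3) if sum(t) % 2 == 0}
odd = {t for t in product((0, 1), repeat=3) if sum(t) % 2 == 1}

faces = [(0, 1), (0, 2), (1, 2)]
print(even == odd)                                               # False
print(all(face(even, i, j) == face(odd, i, j) for i, j in faces))  # True
```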

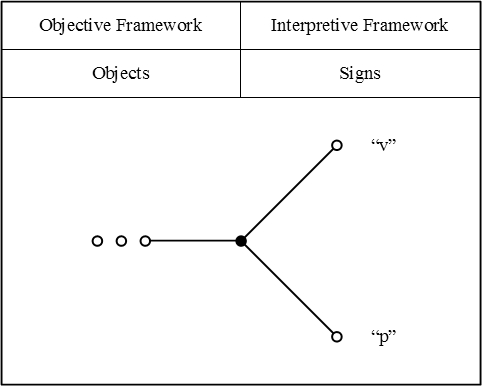

I find that it helps me to draw, or at least to imagine drawing, diagrams of the following form, where I can keep tabs on what's an object, what's a sign, and what's an interpretant sign, for a selected set of sign-relational triples.

Here is how I would picture Peirce's example of equivalent terms, \(\mathrm{v} = \mathrm{p},\!\) where \({}^{\backprime\backprime} \mathrm{v} {}^{\prime\prime}\!\) denotes the Vice-President of the United States, and \({}^{\backprime\backprime} \mathrm{p} {}^{\prime\prime}\!\) denotes the President of the Senate of the United States.

|

| \(\text{Figure 1}~\!\) |

Depending on whether we interpret the terms \({}^{\backprime\backprime} \mathrm{v} {}^{\prime\prime}\!\) and \({}^{\backprime\backprime} \mathrm{p} {}^{\prime\prime}\!\) as applying to persons who hold these offices at one particular time or as applying to all those persons who have held these offices over an extended period of history, their denotations may be either singular or plural, respectively.

As a shortcut technique for indicating general denotations or plural referents, I will use the elliptic convention that represents these by means of figures like “o o o” or “o … o”, placed at the object ends of sign relational triads.

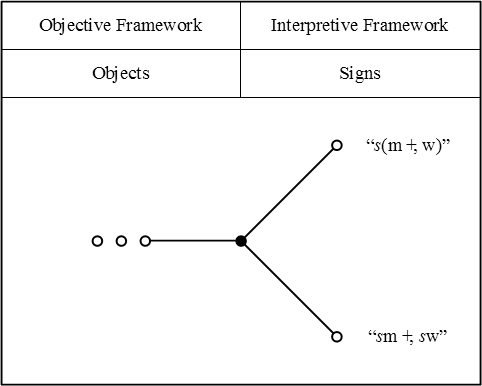

For a more complex example, here is how I would picture Peirce's example of an equivalence between terms that comes about by applying one of the distributive laws, for relative multiplication over absolute summation.

|

| \(\text{Figure 2}\!\) |

Selection 6

The Signs for Multiplication (cont.)

|

A conjugative term like giver naturally requires two correlates, one denoting the thing given, the other the recipient of the gift. We must be able to distinguish, in our notation, the giver of \(\mathrm{A}\!\) to \(\mathrm{B}\!\) from the giver to \(\mathrm{A}\!\) of \(\mathrm{B}\!\), and, therefore, I suppose the signification of the letter equivalent to such a relative to distinguish the correlates as first, second, third, etc., so that “giver of —— to ——” and “giver to —— of ——” will be expressed by different letters. Let \(\mathfrak{g}\) denote the latter of these conjugative terms. Then, the correlates or multiplicands of this multiplier cannot all stand directly after it, as is usual in multiplication, but may be ranged after it in regular order, so that: |

| \(\mathfrak{g}\mathit{x}\mathit{y}\) |

|

will denote a giver to \(\mathit{x}\!\) of \(\mathit{y}\!\). But according to the notation, \(\mathit{x}\!\) here multiplies \(\mathit{y}\!\), so that if we put for \(\mathit{x}\!\) owner (\(\mathit{o}\!\)), and for \(\mathit{y}\!\) horse (\(\mathrm{h}\!\)), |

| \(\mathfrak{g}\mathit{o}\mathrm{h}\) |

|

appears to denote the giver of a horse to an owner of a horse. But let the individual horses be \(\mathrm{H}, \mathrm{H}^{\prime}, \mathrm{H}^{\prime\prime}\), etc. Then: |

| \(\mathrm{h} ~=~ \mathrm{H} ~+\!\!,~ \mathrm{H}^{\prime} ~+\!\!,~ \mathrm{H}^{\prime\prime} ~+\!\!,~ \text{etc.}\) |

| \(\mathfrak{g}\mathit{o}\mathrm{h} ~=~ \mathfrak{g}\mathit{o}(\mathrm{H} ~+\!\!,~ \mathrm{H}^{\prime} ~+\!\!,~ \mathrm{H}^{\prime\prime} ~+\!\!,~ \text{etc.}) ~=~ \mathfrak{g}\mathit{o}\mathrm{H} ~+\!\!,~ \mathfrak{g}\mathit{o}\mathrm{H}^{\prime} ~+\!\!,~ \mathfrak{g}\mathit{o}\mathrm{H}^{\prime\prime} ~+\!\!,~ \text{etc.}\) |

|

Now this last member must be interpreted as a giver of a horse to the owner of that horse, and this, therefore must be the interpretation of \(\mathfrak{g}\mathit{o}\mathrm{h}\). This is always very important. A term multiplied by two relatives shows that the same individual is in the two relations. If we attempt to express the giver of a horse to a lover of a woman, and for that purpose write: |

| \(\mathfrak{g}\mathit{l}\mathrm{w}\mathrm{h}\), |

|

we have written giver of a woman to a lover of her, and if we add brackets, thus, |

| \(\mathfrak{g}(\mathit{l}\mathrm{w})\mathrm{h}\), |

|

we abandon the associative principle of multiplication. A little reflection will show that the associative principle must in some form or other be abandoned at this point. But while this principle is sometimes falsified, it oftener holds, and a notation must be adopted which will show of itself when it holds. We already see that we cannot express multiplication by writing the multiplicand directly after the multiplier; let us then affix subjacent numbers after letters to show where their correlates are to be found. The first number shall denote how many factors must be counted from left to right to reach the first correlate, the second how many more must be counted to reach the second, and so on. Then, the giver of a horse to a lover of a woman may be written: |

| \(\mathfrak{g}_{12} \mathit{l}_1 \mathrm{w} \mathrm{h} ~=~ \mathfrak{g}_{11} \mathit{l}_2 \mathrm{h} \mathrm{w} ~=~ \mathfrak{g}_{2(-1)} \mathrm{h} \mathit{l}_1 \mathrm{w}\). |

|

Of course a negative number indicates that the former correlate follows the latter by the corresponding positive number. A subjacent zero makes the term itself the correlate. Thus, |

| \(\mathit{l}_0\!\) |

|

denotes the lover of that lover or the lover of himself, just as \(\mathfrak{g}\mathit{o}\mathrm{h}\) denotes that the horse is given to the owner of itself, for to make a term doubly a correlate is, by the distributive principle, to make each individual doubly a correlate, so that: |

| \(\mathit{l}_0 ~=~ \mathit{L}_0 ~+\!\!,~ \mathit{L}_0^{\prime} ~+\!\!,~ \mathit{L}_0^{\prime\prime} ~+\!\!,~ \text{etc.}\) |

|

A subjacent sign of infinity may indicate that the correlate is indeterminate, so that: |

| \(\mathit{l}_\infty\) |

|

will denote a lover of something. We shall have some confirmation of this presently. If the last subjacent number is a one it may be omitted. Thus we shall have: |

| \(\mathit{l}_1 ~=~ \mathit{l}\), |

| \(\mathfrak{g}_{11} ~=~ \mathfrak{g}_1 ~=~ \mathfrak{g}\). |

|

This enables us to retain our former expressions \(\mathit{l}\mathrm{w}~\!\), \(\mathfrak{g}\mathit{o}\mathrm{h}\), etc. (Peirce, CP 3.69–70). |
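The difference between Peirce's reading of \(\mathfrak{g}\mathit{o}\mathrm{h}\) and the naive reading can be shown on toy data. This sketch assumes the conjugative term is a set of (giver, recipient, gift) triples and that dyadic relatives are sets of pairs. It also renders \(\mathit{l}_0\) and \(\mathit{l}_\infty\) according to the readings Peirce gives them. All names and data are hypothetical.

```python
# Hypothetical toy data.
horses = {"H1", "H2"}
owner = {("Ann", "H1"), ("Bob", "H2")}      # (owner, thing owned)
giver = {("Cal", "Ann", "H1"),              # Cal gives H1 to Ann, who owns H1
         ("Dee", "Ann", "H2")}              # Dee gives H2 to Ann, who owns only H1
lover = {("Ann", "Bob"), ("Bob", "Bob")}    # (lover, beloved)

def g_o_h(g, o, h):
    """Peirce's reading: giver of a horse to the owner of that same horse.
    The horse z fills a place of g and of o at once."""
    return {x for (x, y, z) in g if z in h and (y, z) in o}

def g_o_h_naive(g, o, h):
    """The reading Peirce rejects: giver of a horse to an owner of some horse."""
    return {x for (x, y, z) in g if z in h and any((y, w) in o for w in h)}

def l_zero(rel):
    """l_0: a lover of himself (the term is its own correlate)."""
    return {x for (x, y) in rel if x == y}

def l_infinity(rel):
    """l_infinity: a lover of something (the correlate is left indeterminate)."""
    return {x for (x, _) in rel}

print(sorted(g_o_h(giver, owner, horses)))        # ['Cal']
print(sorted(g_o_h_naive(giver, owner, horses)))  # ['Cal', 'Dee']
print(sorted(l_zero(lover)), sorted(l_infinity(lover)))  # ['Bob'] ['Ann', 'Bob']
```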

Comment : Sets as Logical Sums

Peirce's way of representing sets as logical sums may seem archaic, but it is quite often used, and is actually the tool of choice in many branches of algebra, combinatorics, computing, and statistics to this very day.

Peirce's application to logic is fairly novel, and the degree of his elaboration of the logic of relative terms is certainly original with him, but this particular genre of representation, commonly going under the handle of generating functions, goes way back, well before anyone thought to stick a flag in set theory as a separate territory or to try to fence off our native possessions of it with expressly decreed axioms. And back in the days when a computer was just a person who computed, before we had the sorts of electronic register machines that we take so much for granted today, mathematicians were constantly using generating functions as a rough and ready type of addressable memory to sort, store, and keep track of their accounts of a wide variety of formal objects of thought.

Let us look at a few simple examples of generating functions, much as I encountered them during my own first adventures in the Fair Land Of Combinatoria.

Suppose that we are given a set of three elements, say, \(\{ a, b, c \},\!\) and we are asked to find all the ways of choosing a subset from this collection.

We can represent this problem setup as the problem of computing the following product:

| \((1 + a)(1 + b)(1 + c).\!\) |

The factor \((1 + a)\!\) represents the option that we have, in choosing a subset of \(\{ a, b, c \},\!\) to leave the element \(a\!\) out (signified by the \(1\!\)), or else to include it (signified by the \(a\!\)), and likewise for the other elements \(b\!\) and \(c\!\) in their turns.

Probably on account of all those years I flippered away playing the oldtime pinball machines, I tend to imagine a product like this being displayed in a vertical array:

|

\(\begin{matrix} (1 ~+~ a) \\ (1 ~+~ b) \\ (1 ~+~ c) \end{matrix}\) |

I picture this as a playboard with six bumpers, the ball chuting down the board in such a career that it strikes exactly one of the two bumpers on each and every one of the three levels.

So a trajectory of the ball where it hits the \(a\!\) bumper on the 1st level, hits the \(1\!\) bumper on the 2nd level, hits the \(c\!\) bumper on the 3rd level, and then exits the board, represents a single term in the desired product and corresponds to the subset \(\{ a, c \}.\!\)

Multiplying out the product \((1 + a)(1 + b)(1 + c),\!\) one obtains:

|

\(\begin{array}{*{15}{c}} 1 & + & a & + & b & + & c & + & ab & + & ac & + & bc & + & abc. \end{array}\) |

And this informs us that the subsets of choice are:

|

\(\begin{matrix} \varnothing, & \{ a \}, & \{ b \}, & \{ c \}, & \{ a, b \}, & \{ a, c \}, & \{ b, c \}, & \{ a, b, c \}. \end{matrix}\) |
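The pinball picture translates directly into code, because each path through the board is a choice of bumper on each level. The minimal Python sketch below multiplies out the product term by term. It then sets \(a = b = c = x\) and counts the terms by degree. The helper names `expand_subset_product` and `monomial` are hypothetical.

```python
from collections import Counter
from itertools import product

def expand_subset_product(elements):
    """Multiply out (1 + a)(1 + b)(1 + c)... term by term.
    On each level the ball hits either the 1 bumper (leave the element out)
    or the element's bumper (put it in); each path yields one term/subset."""
    return [frozenset(e for e, hit in zip(elements, path) if hit)
            for path in product((False, True), repeat=len(elements))]

def monomial(subset):
    """Write a subset as a monomial, with the empty subset written as 1."""
    return "".join(sorted(subset)) or "1"

subsets = expand_subset_product(["a", "b", "c"])
terms = sorted((monomial(s) for s in subsets),
               key=lambda m: (m != "1", len(m), m))
print(" + ".join(terms))   # 1 + a + b + c + ab + ac + bc + abc

# Setting a = b = c = x collapses the product to (1 + x)^3:
# the coefficients count the subsets of each size.
sizes = Counter(len(s) for s in subsets)
print([sizes[k] for k in range(4)])   # [1, 3, 3, 1]
```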

Selection 7

The Signs for Multiplication (cont.)

|

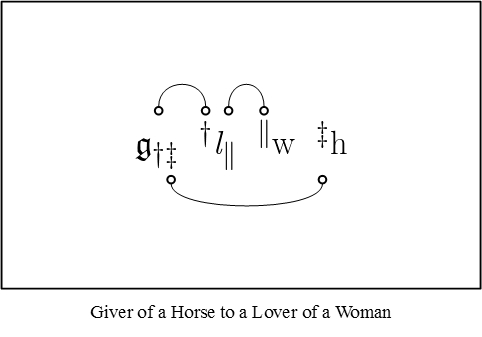

The associative principle does not hold in this counting of factors. Because it does not hold, these subjacent numbers are frequently inconvenient in practice, and I therefore use also another mode of showing where the correlate of a term is to be found. This is by means of the marks of reference, \(\dagger ~ \ddagger ~ \parallel ~ \S ~ \P\), which are placed subjacent to the relative term and before and above the correlate. Thus, giver of a horse to a lover of a woman may be written: |

| \(\mathfrak{g}_{\dagger\ddagger} \, ^\dagger\mathit{l}_\parallel \, ^\parallel\mathrm{w} \, ^\ddagger\mathrm{h}\) |

|

The asterisk I use exclusively to refer to the last correlate of the last relative of the algebraic term. Now, considering the order of multiplication to be: — a term, a correlate of it, a correlate of that correlate, etc. — there is no violation of the associative principle. The only violations of it in this mode of notation are that in thus passing from relative to correlate, we skip about among the factors in an irregular manner, and that we cannot substitute in such an expression as \(\mathfrak{g}\mathit{o}\mathrm{h}\) a single letter for \(\mathit{o}\mathrm{h}.\!\) I would suggest that such a notation may be found useful in treating other cases of non-associative multiplication. By comparing this with what was said above [in CP 3.55] concerning functional multiplication, it appears that multiplication by a conjugative term is functional, and that the letter denoting such a term is a symbol of operation. I am therefore using two alphabets, the Greek and Kennerly, where only one was necessary. But it is convenient to use both. (Peirce, CP 3.71–72). |

Comment : Proto-Graphical Syntax

It is clear from our last excerpt that Peirce is already on the verge of a graphical syntax for the logic of relatives. Indeed, it seems likely that he had already reached this point in his own thinking.

For instance, it seems quite impossible to read his last variation on the theme of a “giver of a horse to a lover of a woman” without drawing lines of identity to connect up the corresponding marks of reference, like this:

|

(3) |
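Read anachronistically, the marks of reference behave like bound variables, and a term becomes what a database theorist would call a conjunctive query. On this reading, each shared mark is a line of identity, and the term denotes whatever can fill the first place of the leading relative. The evaluator below is a hypothetical brute-force sketch under that reading. It uses the "giver of a horse to a lover of a woman" example with made-up data and is not Peirce's own procedure.

```python
from itertools import product

def evaluate(factors, universe, head):
    """Evaluate a term written with marks of reference (hypothetical helper).

    factors: list of (relation, marks) pairs; relation is a set of tuples and
             marks names each place, so a mark shared by two factors acts like
             a line of identity joining them.
    head:    the mark whose values the whole term denotes.
    Brute force over all assignments, so only suitable for toy universes."""
    marks = sorted({m for _, ms in factors for m in ms})
    result = set()
    for values in product(sorted(universe), repeat=len(marks)):
        env = dict(zip(marks, values))
        if all(tuple(env[m] for m in ms) in rel for rel, ms in factors):
            result.add(env[head])
    return result

# Hypothetical toy data; absolute terms are sets of 1-tuples.
giver = {("Cal", "Bob", "H1"), ("Dee", "Eve", "H1")}   # giver to ... of ...
lover = {("Bob", "Eve")}
woman = {("Eve",)}
horse = {("H1",)}
universe = {"Bob", "Cal", "Dee", "Eve", "H1"}

# g_{dagger ddagger}  dagger-l_{par}  par-w  ddagger-h, with "x" for the giver.
term = [(giver, ("x", "dagger", "ddagger")),
        (lover, ("dagger", "par")),
        (woman, ("par",)),
        (horse, ("ddagger",))]

print(evaluate(term, universe, "x"))                   # {'Cal'}
print(evaluate(term[::-1], universe, "x") == {"Cal"})  # True
```

Because the factors are matched by marks rather than by position, reordering them leaves the result unchanged. This fits Peirce's remark that the marks of reference avoid the violations of associativity that the subjacent numbers incur.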

Selection 8

The Signs for Multiplication (cont.)

Thus far, we have considered the multiplication of relative terms only. Since our conception of multiplication is the application of a relation, we can only multiply absolute terms by considering them as relatives. Now the absolute term “man” is really exactly equivalent to the relative term “man that is ——”, and so with any other. I shall write a comma after any absolute term to show that it is so regarded as a relative term. Then “man that is black” will be written:

\(\mathrm{m},\!\mathrm{b}\!\)

But not only may any absolute term be thus regarded as a relative term, but any relative term may in the same way be regarded as a relative with one correlate more. It is convenient to take this additional correlate as the first one. Then:

\(\mathit{l},\!\mathit{s}\mathrm{w}\)

will denote a lover of a woman that is a servant of that woman. The comma here after \(\mathit{l}\!\) should not be considered as altering at all the meaning of \(\mathit{l}\!\), but as only a subjacent sign, serving to alter the arrangement of the correlates. In point of fact, since a comma may be added in this way to any relative term, it may be added to one of these very relatives formed by a comma, and thus by the addition of two commas an absolute term becomes a relative of two correlates. So:

\(\mathrm{m},\!,\!\mathrm{b},\!\mathrm{r}\)

interpreted like

\(\mathfrak{g}\mathit{o}\mathrm{h}\)

means a man that is a rich individual and is a black that is that rich individual. But this has no other meaning than:

\(\mathrm{m},\!\mathrm{b},\!\mathrm{r}\)

or a man that is a black that is rich. Thus we see that, after one comma is added, the addition of another does not change the meaning at all, so that whatever has one comma after it must be regarded as having an infinite number. If, therefore, \(\mathit{l},\!,\!\mathit{s}\mathrm{w}\) is not the same as \(\mathit{l},\!\mathit{s}\mathrm{w}\) (as it plainly is not, because the latter means a lover and servant of a woman, and the former a lover of and servant of and same as a woman), this is simply because the writing of the comma alters the arrangement of the correlates. And if we are to suppose that absolute terms are multipliers at all (as mathematical generality demands that we should), we must regard every term as being a relative requiring an infinite number of correlates to its virtual infinite series “that is —— and is —— and is —— etc.” Now a relative formed by a comma of course receives its subjacent numbers like any relative, but the question is, What are to be the implied subjacent numbers for these implied correlates? Any term may be regarded as having an infinite number of factors, those at the end being ones, thus:

\(\mathit{l},\!\mathit{s}\mathrm{w} ~=~ \mathit{l},\!\mathit{s}\mathrm{w},\!\mathit{1},\!\mathit{1},\!\mathit{1},\!\mathit{1},\!\mathit{1},\!\mathit{1},\!\mathit{1}, ~\text{etc.}\)

A subjacent number may therefore be as great as we please. But all these ones denote the same identical individual denoted by \(\mathrm{w}\!\); what then can be the subjacent numbers to be applied to \(\mathit{s}\!\), for instance, on account of its infinite “that is”'s? What numbers can separate it from being identical with \(\mathrm{w}\!\)? There are only two. The first is zero, which plainly neutralizes a comma completely, since

\(\mathit{s},_0\!\mathrm{w} ~=~ \mathit{s}\mathrm{w}\)

and the other is infinity; for as \(1^\infty\) is indeterminate in ordinary algebra, so it will be shown hereafter to be here, so that to remove the correlate by the product of an infinite series of ones is to leave it indeterminate. Accordingly,

\(\mathrm{m},_\infty\)

should be regarded as expressing some man. Any term, then, is properly to be regarded as having an infinite number of commas, all or some of which are neutralized by zeros. “Something” may then be expressed by:

\(\mathit{1}_\infty\!\)

I shall for brevity frequently express this by an antique figure one \((\mathfrak{1}).\) “Anything” by:

\(\mathit{1}_0\!\)

I shall often also write a straight \(1\!\) for anything. (Peirce, CP 3.73).
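One way to test Peirce's claims about the comma is to read every term extensionally. In the Python sketch below, which is my own gloss and not Peirce's apparatus, an absolute term is a set of individuals, the comma sends it to its diagonal \(\mathrm{m}, = \{(x, x) : x \in \mathrm{m}\}\), a comma on a dyadic relative inserts a new first correlate identical with the relate, and a term followed by its correlates keeps those relates for which suitable correlates exist. The extensions of \(\mathrm{m}, \mathrm{b}, \mathrm{r}\) are hypothetical. Under these assumptions \(\mathrm{m},\!\mathrm{b}\) comes out as the intersection of the man and black classes, and \(\mathrm{m},\!,\!\mathrm{b},\!\mathrm{r}\), read like \(\mathfrak{g}\mathit{o}\mathrm{h}\), coincides with \(\mathrm{m},\!\mathrm{b},\!\mathrm{r}\).

```python
def comma(term):
    """Diagonal extension of an absolute term: m, = {(x, x) : x in m}."""
    return {(x, x) for x in term}

def comma_rel(rel):
    """Diagonal extension of a dyadic relative, new correlate first:
    l, = {(x, x, y) : (x, y) in l}."""
    return {(x, x, y) for (x, y) in rel}

def apply2(rel, absolute):
    """Dyadic relative followed by an absolute term: {x : (x, y) in rel, y in absolute}."""
    return {x for (x, y) in rel if y in absolute}

def apply3_like_goh(triadic, rel, absolute):
    """Read T R a like g o h, where T's last correlate is also R's correlate:
    {x : (x, y, z) in T, (y, z) in rel, z in absolute}."""
    return {x for (x, y, z) in triadic if z in absolute and (y, z) in rel}

# Hypothetical extensions over a toy universe.
m = {"Al", "Bo", "Cy"}  # man
b = {"Bo", "Cy", "Di"}  # black
r = {"Cy", "Di"}        # rich

m_b = apply2(comma(m), b)                                      # m,b
m_b_r = apply2(comma(m), apply2(comma(b), r))                  # m,b,r
m_cc_b_r = apply3_like_goh(comma_rel(comma(m)), comma(b), r)  # m,,b,r

print(sorted(m_b))        # ['Bo', 'Cy']
print(m_b_r == m_cc_b_r)  # True
```

The second comma only pads the relative with another correlate that must again be the same individual, which is why, on this reading, it adds no new constraint.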

Commentary Note 8.1

To my way of thinking, CP 3.73 is one of the most remarkable passages in the history of logic. In this first pass over its deeper contents I won't be able to accord it much more than a superficial dusting off.

Let us imagine a concrete example that will serve in developing the uses of Peirce's notation. Entertain a discourse whose universe \(X\!\) will remind us a little of the cast of characters in Shakespeare's Othello.

\(X ~=~ \{ \mathrm{Bianca}, \mathrm{Cassio}, \mathrm{Clown}, \mathrm{Desdemona}, \mathrm{Emilia}, \mathrm{Iago}, \mathrm{Othello} \}.\)

The universe \(X\!\) is “that class of individuals about which alone the whole discourse is understood to run”, but its marking out for special recognition as a universe of discourse in no way rules out the possibility that “discourse may run upon something which is not a subjective part of the universe; for instance, upon the qualities or collections of the individuals it contains” (CP 3.65).

In order to provide ourselves with the convenience of abbreviated terms, while preserving Peirce's conventions about capitalization, we may use the alternate names \(^{\backprime\backprime}\mathrm{u}^{\prime\prime}\) for the universe \(X\!\) and \(^{\backprime\backprime}\mathrm{Jeste}^{\prime\prime}\) for the character \(\mathrm{Clown}.~\!\) This permits the above description of the universe of discourse to be rewritten in the following fashion:

\(\mathrm{u} ~=~ \{ \mathrm{B}, \mathrm{C}, \mathrm{D}, \mathrm{E}, \mathrm{I}, \mathrm{J}, \mathrm{O} \}\)

This specification of the universe of discourse could be summed up in Peirce's notation by the following equation:

\(\begin{array}{*{15}{c}} \mathbf{1} & = & \mathrm{B} & +\!\!, & \mathrm{C} & +\!\!, & \mathrm{D} & +\!\!, & \mathrm{E} & +\!\!, & \mathrm{I} & +\!\!, & \mathrm{J} & +\!\!, & \mathrm{O} \end{array}\)

Within this discussion, then, the individual terms are as follows:

\(\begin{matrix} ^{\backprime\backprime}\mathrm{B}^{\prime\prime}, & ^{\backprime\backprime}\mathrm{C}^{\prime\prime}, & ^{\backprime\backprime}\mathrm{D}^{\prime\prime}, & ^{\backprime\backprime}\mathrm{E}^{\prime\prime}, & ^{\backprime\backprime}\mathrm{I}^{\prime\prime}, & ^{\backprime\backprime}\mathrm{J}^{\prime\prime}, & ^{\backprime\backprime}\mathrm{O}^{\prime\prime} \end{matrix}\)

Each of these terms denotes in a singular fashion the corresponding individual in \(X.\!\)
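The equation for \(\mathbf{1}\) can be checked mechanically if Peirce's \(+\!\!,\) is read as the invertible addition of terms that have no individuals in common, which is the reading assumed here. The Python sketch below represents each individual term by the one-element set it denotes, verifies that these sets are pairwise disjoint and together exhaust the universe, and builds the identity relative \(\mathit{1}\) as the diagonal of the universe. The function and variable names are illustrative.

```python
from itertools import combinations

# Universe of the Othello example, by the abbreviations used in the text.
universe = {"B", "C", "D", "E", "I", "J", "O"}

# Each individual term denotes exactly one individual: its one-element set.
individual_terms = {name: {name} for name in universe}

def is_invertible_sum(parts, whole):
    """True when the parts are pairwise disjoint and their union is `whole`,
    i.e. when the sum of the parts is a sum of mutually exclusive terms."""
    pairwise_disjoint = all(p.isdisjoint(q) for p, q in combinations(parts, 2))
    return pairwise_disjoint and set().union(*parts) == whole

# 1 = B +, C +, D +, E +, I +, J +, O
print(is_invertible_sum(list(individual_terms.values()), universe))  # True

# The identity relative 1 (italic): the diagonal of the universe.
identity = {(x, x) for x in universe}
print(len(identity))  # 7
```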

By way of general terms in this discussion, we may begin with the following set:

\(\begin{array}{ccl} ^{\backprime\backprime}\mathrm{b}^{\prime\prime} & = & ^{\backprime\backprime}\mathrm{black}^{\prime\prime} \end{array}\)

…

[Figure 7]

[Figure 8]

One way to approach the problem of “information fusion” in Peirce's syntax is to soften the distinction between adjacent terms and subjacent signs and to treat the types of constraints that they separately signify more on a par with each other. To that end, I will set forth a way of thinking about relational composition that emphasizes the set-theoretic constraints involved in the construction of a composite. For example, suppose that we are given the relations \(L \subseteq X \times Y\) and \(M \subseteq Y \times Z.\) Table 9 and Figure 10 present two ways of picturing the constraints that are involved in constructing the relational composition \(L \circ M \subseteq X \times Z.\)

The way to read Table 9 is to imagine that you are playing a game that involves placing tokens on the squares of a board that is marked in just this way. The rules are that you have to place a single token on each marked square in the middle of the board in such a way that all of the indicated constraints are satisfied. That is to say, you have to place a token whose denomination is a value in the set \(X\!\) on each of the squares marked \({}^{\backprime\backprime} X {}^{\prime\prime},\) and similarly for the squares marked \({}^{\backprime\backprime} Y {}^{\prime\prime}\) and \({}^{\backprime\backprime} Z {}^{\prime\prime},\) meanwhile leaving all of the blank squares empty. Furthermore, the tokens placed in each row and column have to obey the relational constraints that are indicated at the heads of the corresponding row and column. Thus, the two tokens from \(X\!\) have to denominate the very same value from \(X,\!\) and likewise for \(Y\!\) and \(Z,\!\) while the pairs of tokens on the rows marked \({}^{\backprime\backprime} L {}^{\prime\prime}\) and \({}^{\backprime\backprime} M {}^{\prime\prime}\) are required to denote elements that are in the relations \(L\!\) and \(M,\!\) respectively. The upshot is that when just this much is done, that is, when the \(L,\!\) \(M,\!\) and \(\mathit{1}\!\) relations are satisfied, then the row marked \({}^{\backprime\backprime} L \circ M {}^{\prime\prime}\) will automatically bear the tokens of a pair of elements in the composite relation \(L \circ M.\!\) Figure 10 shows a different way of viewing the same situation.
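Since Table 9 itself is not reproduced here, the following Python sketch encodes one reading of the game just described, and that layout is an assumption: row \(L\) holds an \(X\) token and a \(Y\) token, row \(M\) a \(Y\) token and a \(Z\) token, row \(L \circ M\) an \(X\) token and a \(Z\) token, and each column demands that its two tokens agree. A brute-force search over all placements should then yield exactly the pairs of the usual composition. The sets and relations are hypothetical toy data.

```python
from itertools import product

def compose_by_board(L, M, X, Y, Z):
    """Brute-force the token game under an assumed board layout:
    row L holds (x_L, y_L), row M holds (y_M, z_M), row L o M holds (x_C, z_C),
    and each column X, Y, Z imposes the identity constraint 1 on its tokens."""
    solutions = set()
    for x_L, x_C, y_L, y_M, z_M, z_C in product(X, X, Y, Y, Z, Z):
        columns_ok = x_L == x_C and y_L == y_M and z_M == z_C  # the 1 constraints
        rows_ok = (x_L, y_L) in L and (y_M, z_M) in M          # the L and M constraints
        if columns_ok and rows_ok:
            solutions.add((x_C, z_C))  # read the L o M row off the board
    return solutions

def compose(L, M):
    """Textbook composition: (x, z) whenever some y has (x, y) in L and (y, z) in M."""
    return {(x, z) for (x, y) in L for (y2, z) in M if y == y2}

# Hypothetical toy data.
X = {1, 2}
Y = {"p", "q"}
Z = {"u", "v"}
L = {(1, "p"), (2, "q")}
M = {("p", "u"), ("p", "v")}

print(sorted(compose_by_board(L, M, X, Y, Z)))           # [(1, 'u'), (1, 'v')]
print(compose_by_board(L, M, X, Y, Z) == compose(L, M))  # True
```

The board search grows with the product of all six token domains and is meant only to mirror the picture; `compose` is the form one would use in practice.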

Commentary Note 10.3

I will devote some time to drawing out the relationships that exist among the different pictures of relations and relative terms that were shown above, or as redrawn here:

Figures 11 and 12 present examples of relative multiplication in one of the styles of syntax that Peirce used, to which I added lines of identity to connect the corresponding marks of reference. These pictures are adapted to showing the anatomy of relative terms, while the forms of analysis illustrated in Table 13 and Figure 14 are designed to highlight the structures of the objective relations themselves.

There are many ways that Peirce might have gotten from his 1870 Notation for the Logic of Relatives to his more evolved systems of Logical Graphs. It is interesting to speculate on how the metamorphosis might have been accomplished by way of transformations that act on these nascent forms of syntax and that take place not too far from the pale of its means, that is, as nearly as possible according to the rules and the permissions of the initial system itself. In Existential Graphs, a relation is represented by a node whose degree is the adicity of that relation, and which is adjacent via lines of identity to the nodes that represent its correlative relations, including as a special case any of its terminal individual arguments. In the 1870 Logic of Relatives, implicit lines of identity are invoked by the subjacent numbers and marks of reference only when a correlate of some relation is the relate of some relation. Thus, the principal relate, which is not a correlate of any explicit relation, is not singled out in this way. Remarkably enough, the comma modifier itself provides us with a mechanism to abstract the logic of relations from the logic of relatives, and thus to forge a possible link between the syntax of relative terms and the more graphical depiction of the objective relations themselves. Figure 15 demonstrates this possibility, posing a transitional case between the style of syntax in Figure 11 and the picture of composition in Figure 14.

In this composite sketch, the diagonal extension \(\mathit{1}\!\) of the universe \(\mathbf{1}\!\) is invoked up front to anchor an explicit line of identity for the leading relate of the composition, while the terminal argument \(\mathrm{w}\!\) has been generalized to the whole universe \(\mathbf{1},\!\) in effect, executing an act of abstraction. This type of universal bracketing isolates the composing of the relations \(L\!\) and \(S\!\) to form the composite \(L \circ S.\!\) The three relational domains \(X, Y, Z\!\) may be distinguished from one another, or else rolled up into a single universe of discourse, as one prefers.

Commentary Note 10.4

From now on I will use the forms of analysis exemplified in the last set of Figures and Tables as a routine bridge between the logic of relative terms and the logic of their extended relations. For future reference, we may think of Table 13 as illustrating the spreadsheet model of relational composition, while Figure 14 may be thought of as making a start toward a hypergraph model of generalized compositions. I will explain the hypergraph model in some detail at a later point. The transitional form of analysis represented by Figure 15 may be called the universal bracketing of relatives as relations.

Commentary Note 10.5

We have sufficiently covered the application of the comma functor, or the diagonal extension, to absolute terms, so let us return to where we were in working our way through CP 3.73 and see whether we can validate Peirce's statements about the “commifications” of 2-adic relative terms that yield their 3-adic diagonal extensions.
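Peirce's claim about \(\mathit{l},\!\mathit{s}\mathrm{w}\) can first be tried on a small hypothetical example, separate from the Othello data. The Python sketch below assumes an extensional reading: \(\mathit{l},\) is taken to be the 3-adic relative \(\{(x, x, y) : (x, y) \in L\}\), with the new correlate placed first as Peirce prescribes, and a 3-adic term followed by a relative and an absolute term is read in the manner of \(\mathfrak{g}\mathit{o}\mathrm{h}\). On that reading \(\mathit{l},\!\mathit{s}\mathrm{w}\) comes out as “a lover and servant of a woman”, unlike the plain product \(\mathit{l}\mathit{s}\mathrm{w}\). The function `bracket` is my own hypothetical rendering of universal bracketing: it keeps the leading relate and lets the terminal argument range over the individuals of the universe, which recovers \(L \circ S\).

```python
def comma_rel(rel):
    """3-adic diagonal extension, new correlate first: l, = {(x, x, y) : (x, y) in l}."""
    return {(x, x, y) for (x, y) in rel}

def apply2(rel, absolute):
    """Dyadic relative followed by an absolute term: {x : (x, y) in rel, y in absolute}."""
    return {x for (x, y) in rel if y in absolute}

def apply3_like_goh(triadic, rel, absolute):
    """Read T R a like g o h: {x : (x, y, z) in T, (y, z) in rel, z in absolute}."""
    return {x for (x, y, z) in triadic if z in absolute and (y, z) in rel}

def compose(L, M):
    """(x, z) whenever some y has (x, y) in L and (y, z) in M."""
    return {(x, z) for (x, y) in L for (y2, z) in M if y == y2}

def bracket(L, S, universe):
    """Hypothetical universal bracketing: keep the leading relate x and let the
    terminal argument range over singletons {z} of the universe."""
    return {(x, z) for x in universe for z in universe
            if x in apply2(L, apply2(S, {z}))}

# Hypothetical toy data, not the Othello data set.
universe = {"a", "b", "c", "d"}
lover = {("a", "c"), ("b", "d"), ("c", "a")}
servant = {("a", "c"), ("b", "c"), ("d", "c")}
woman = {"c", "d"}

l_comma_s_w = apply3_like_goh(comma_rel(lover), servant, woman)      # l,sw
lover_and_servant = {x for (x, w) in lover & servant if w in woman}  # direct reading
l_s_w = apply2(lover, apply2(servant, woman))                        # lsw, no comma

print(sorted(l_comma_s_w))                                 # ['a']
print(l_comma_s_w == lover_and_servant)                    # True
print(sorted(l_s_w))                                       # ['b', 'c']
print(bracket(lover, servant, universe) == compose(lover, servant))  # True
```

The difference between the first and third results is the sense in which the comma “alters the arrangement of the correlates”: without it, the servant is a further individual; with it, the servant is the lover.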

Just to plant our feet on a more solid stage, let's apply this idea to the Othello example. For this performance only, just to make the example more interesting, let us assume that \(\mathrm{Jeste ~ (J)}\!\) is secretly in love with \(\mathrm{Desdemona ~ (D)}.\!\) Then we begin with the modified data set:
